The News: NVIDIA H100 Tensor Core GPUs once again set new AI computing performance records in the latest round of MLPerf Training v2.1 industry-standard benchmark tests, focusing this time on enterprise AI workloads. The newest MLPerf results for enterprise AI performance arrive two months after NVIDIA H100 Tensor Core GPUs set high marks in AI inferencing. In another accomplishment, NVIDIA’s older Ampere-based A100 GPUs also boosted their performance in the latest MLPerf testing in the high-performance computing (HPC) category. Read the full NVIDIA blog post on its latest MLPerf v2.1 AI performance test results.

NVIDIA H100 is Again Impressive on MLPerf AI Test Benchmarks

Analyst Take: NVIDIA H100 Tensor Core GPUs have done it again, continuing their performance dominance in the latest MLPerf industry-standard benchmark testing for AI computing. The latest benchmark testing, by the open source engineering consortium MLCommons, is important in the industry because it provides standards for evaluating AI training and inferencing performance, comparing GPUs head-to-head from an equal footing. The MLPerf testing is a respected yardstick: vendors submit their chips and are compared against one another to establish real-world AI performance leadership.

And when it comes to the power rankings in this testing, NVIDIA continues to stay in front of its competition, with the latest NVIDIA H100 Hopper GPUs delivering up to 6.7x more performance than previous-generation GPUs. This is a very impressive result for the roughly 2.5 years in which NVIDIA developed, refined, and released its A100 and then followed up with its H100 GPUs. Even the older Ampere-based A100 chips now deliver 2.5x more performance than when they were first released in 2020, thanks to software upgrades, but the newer H100 Tensor Core GPUs are now dominating the competition, according to the latest MLPerf tests.

Also notable in the latest tests is that the newest H100 Hopper GPUs excelled on the BERT model, which is a particularly large and challenging test in the MLPerf benchmark suite.

NVIDIA again entered its GPUs in every test across the MLPerf benchmark suite, rather than hand-picking only the tests where its GPUs would perform best. I believe this continues to be notable and impressive on NVIDIA’s part: the company goes in without blinders and sees where things shake out.

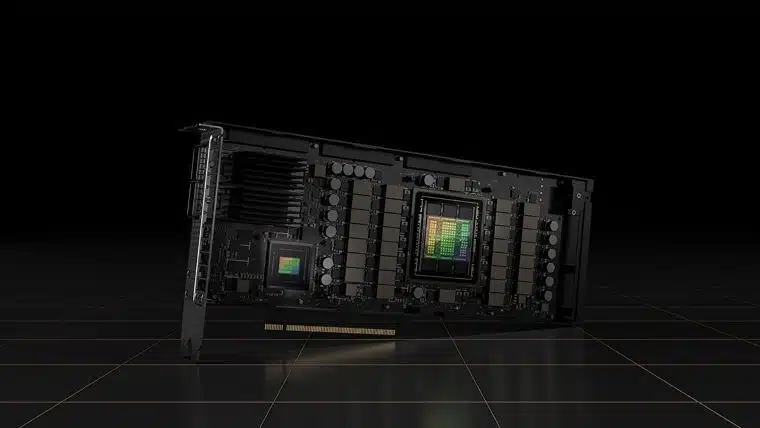

NVIDIA’s H100 GPUs include 80 billion transistors and are built on a TSMC 4nm process. They also include a new Transformer Engine that is as much as six times faster than the previous generation, as well as a highly scalable, super-fast NVIDIA NVLink interconnect. The NVIDIA H100 Tensor Core GPU is the company’s first GPU based on its latest NVIDIA Hopper accelerated computing platform architecture, which was unveiled in March 2022 to succeed the Ampere architecture introduced in 2020.

What the MLPerf Tests Mean

The latest round of MLPerf AI training benchmark testing brought together entries from NVIDIA and 11 partners, which included systems makers ASUS, Dell Technologies, Fujitsu, GIGABYTE, Hewlett Packard Enterprise, Lenovo, and Supermicro. Overall, there were almost 200 results from 18 submitters using hardware that ranged from small workstations to large-scale data center systems with thousands of processors.

By putting the GPUs through this demanding testing, the MLPerf benchmarks give enterprise buyers, researchers, and others detailed information on how the chips performed under heavy AI workloads. This information and data can be incredibly valuable to help them determine what hardware and software they should deploy and use for their own demanding AI models and workloads.

The MLCommons consortium includes AI leaders from academia, research labs, and industry. The consortium’s aim is to build fair and useful benchmarks that can produce unbiased evaluations of training and inference performance for hardware, software, and services under prescribed conditions. MLPerf does its testing at regular intervals and adds new workloads as needed to represent the state of the art in AI, according to the group. The benchmark suites are open source and peer reviewed.

According to the consortium, the MLPerf Training benchmark suite measures performance for training machine learning models used in commercial applications such as recommender systems, speech-to-text conversion, autonomous and semi-autonomous vehicles, medical imaging, and more.
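
To make a figure such as "up to 6.7x" concrete: MLPerf Training results are reported as the time needed to train a model to a target quality, so a speedup is simply the ratio of two training times. Below is a minimal Python sketch of that arithmetic. The function name and the timings are hypothetical, chosen for illustration only; they are not actual MLPerf results.

```python
def speedup(baseline_minutes: float, new_minutes: float) -> float:
    """Return how many times faster the new result is than the baseline."""
    if baseline_minutes <= 0 or new_minutes <= 0:
        raise ValueError("training times must be positive")
    return baseline_minutes / new_minutes


# Hypothetical time-to-train values in minutes (illustration only,
# not taken from any MLPerf submission).
baseline = 67.0  # assumed older-generation result
new = 10.0       # assumed newer-generation result

print(f"{speedup(baseline, new):.1f}x")  # prints "6.7x"
```

Read this way, a lower time to train on the same benchmark translates directly into a higher "x" multiple over the baseline.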

MLPerf HPC Testing Also Shows the Staying Power of NVIDIA A100 GPUs

Meanwhile, in the latest MLPerf benchmark testing for HPC workloads, NVIDIA’s older A100 Tensor Core Ampere-based GPUs improved their performance over records they set in 2021. The MLPerf HPC benchmark suite is used with supercomputers and measures the time it takes to train machine learning models for scientific applications and related work. These workloads can include weather modeling, cosmological simulation, and predicting chemical reactions based on quantum mechanics.

NVIDIA Overview

For NVIDIA, the latest MLPerf testing results clearly show that the company is continuing its leadership and performance advantages in a marketplace where higher performance and broader capabilities are always the main selling points.

As NVIDIA increases its focus on the capabilities and power of AI, I believe it will help inspire and drive the future of this still-nascent technology across the consumer, enterprise, scientific, government, defense, and other markets. My view remains unchanged: NVIDIA continues to be an exciting company to watch in the AI marketplace around the globe, and it will be fascinating to track its next moves in this always-evolving field. I cannot wait to see what the company comes up with next in the world of AI, machine learning, and HPC.

Disclosure: Futurum Research is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually, based on data and other information that might have been provided for validation, and are not those of Futurum Research as a whole.
