Analyst(s): Brendan Burke

Publication Date: March 13, 2026

NVIDIA has announced a $2 billion investment in AI cloud company Nebius as part of a strategic partnership to build hyperscale AI infrastructure. The collaboration focuses on AI factory design, inference, and large-scale data center deployment, aiming to deploy more than 5 gigawatts of NVIDIA systems by 2030.

What is Covered in This Article:

- NVIDIA’s $2 billion investment in Nebius and strategic partnership to build hyperscale AI cloud infrastructure

- Deployment of more than 5 gigawatts of NVIDIA systems by 2030 to support growing AI compute demand

- Collaboration on AI factory architecture, inference platforms, and data center infrastructure

- Broader context of NVIDIA’s increasing investments in AI infrastructure companies

The News: NVIDIA and Nebius Group announced a strategic partnership to develop and deploy the next generation of hyperscale AI cloud infrastructure. As part of the agreement, NVIDIA will invest $2 billion in Nebius and collaborate across AI factory design, inference platforms, infrastructure deployment, and fleet management to support growing demand for high-performance compute.

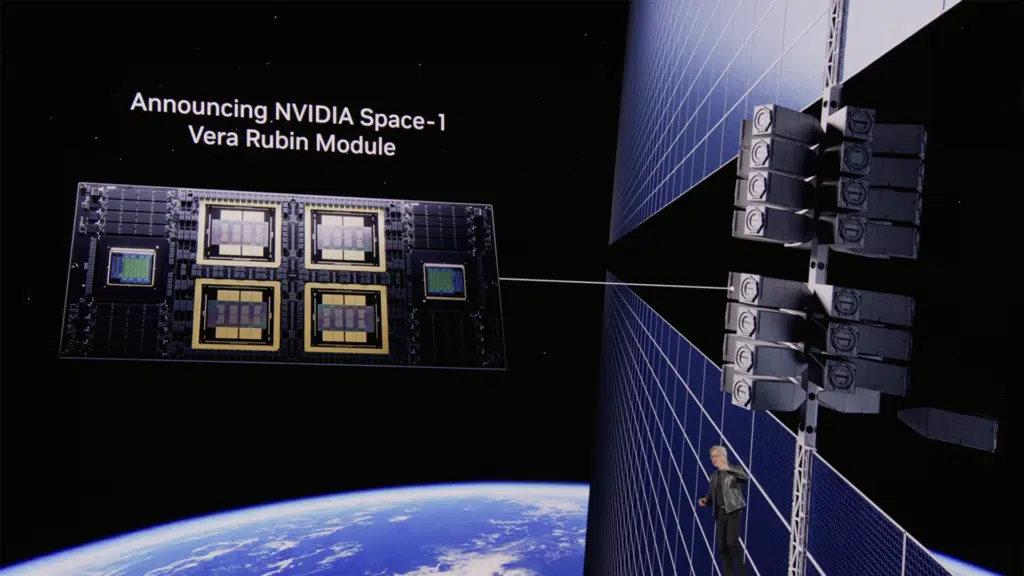

The partnership aims to enable Nebius to deploy more than 5 gigawatts of NVIDIA systems by 2030, supported by early access to NVIDIA’s accelerated computing platforms and architectures, including Rubin, Vera CPUs, and BlueField storage systems. The collaboration builds on Nebius’s ongoing deployment of NVIDIA infrastructure across global data centers, including gigawatt-scale AI factories in the United States.

Nebius Designs the Agentic Era of AI Cloud Platforms with NVIDIA Investment

Analyst Take — Nebius Moves Beyond the Neocloud Label into the Agentic Era: The NVIDIA investment validates Nebius’s expansion beyond bare metal infrastructure into managed inference and agentic AI services. The term “neocloud” was coined to differentiate newer infrastructure players from traditional hyperscalers, but it obscures important differences within this category. While some GPU cloud providers offer what amounts to manual cluster provisioning with multi-year rental commitments, Nebius has invested in building a software-defined platform where resources are API-accessible, scalable on demand, and abstracted from the complexity of physical infrastructure. This is a developer-centric approach that mirrors what made the original public cloud revolution successful, but purpose-built for AI workloads.

Nebius’s approach is validated by its customer roster. The company has secured multi-billion-dollar infrastructure deals with Microsoft and with Meta Platforms, positioning it alongside CoreWeave as one of the most significant AI-focused infrastructure providers outside the hyperscaler tier. Futurum’s AI Cloud Platforms Signal Report identified Nebius as one of the prominent NVIDIA-certified cloud partners deploying Blackwell-class infrastructure globally, including in the UK alongside Nscale.

Expansion of AI Factories Built on a Five-Layer Cake

The partnership between NVIDIA and Nebius centers on the co-design of AI factories. The companies will collaborate on AI factory architecture, including access to partner design materials, system reviews, early hardware samples, and system software support. This engineering collaboration extends across the full technology stack, from data center design to production software supporting AI workloads. Nebius is already deploying NVIDIA infrastructure globally, including gigawatt-scale AI factories in the US. By combining capital investment with technical collaboration, the agreement aims to accelerate Nebius’s buildout of large-scale AI compute capacity. The partnership treats AI factory infrastructure as an area of competitive differentiation, one achieved by assembling the full five-layer cake of energy, chips, infrastructure, models, and applications.

Token Factory’s Shift to Inference Economics

The establishment of Nebius Token Factory represents the company’s most strategically significant product decision to date. Rather than selling pure compute, Token Factory delivers managed inference endpoints where the SLA is defined by token throughput, latency, and cost. This distinction matters because the economics of AI are shifting decisively from training to inference. Futurum’s Q1 2026 AI Platforms State of the Market report confirms this dynamic, noting that “organizations that fail to model inference costs accurately will face budget surprises as they scale production deployments, particularly for reasoning-intensive applications.”

Inference is a unit economics game. Every token generated carries a marginal cost, and for many AI product companies, the business operates on thin margins where inference cost determines whether growth is economically viable. Token Factory’s value proposition is rooted in Nebius’s full-stack control. By owning the physical infrastructure layer and the software optimization stack, Nebius can execute multi-layered optimizations that pure infrastructure or pure software providers cannot. Through the acquisition of Tavily, Token Factory offers the ability to build agentic applications based on open-source reasoning models with access to live internet data, enabling the deployment of enterprise-grade agents. This capability aligns with NVIDIA’s view that AI-generated tokens are productive and profitable for both customers and cloud providers after the Agentic AI inflection point.
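The unit economics argument above can be made concrete with a small calculation. The following is a minimal sketch, not a description of Nebius's or NVIDIA's actual pricing or cost model: the class name, the GPU hourly cost, the throughput figures, the utilization rate, and the price per million tokens are all hypothetical values chosen for illustration. It shows how serving cost per million tokens falls as throughput per GPU rises, and how that changes gross margin at a fixed price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceEndpoint:
    """Hypothetical cost model for one managed inference endpoint."""

    gpu_hourly_cost_usd: float  # assumed all-in cost per GPU-hour
    gpus: int                   # number of GPUs serving the endpoint
    tokens_per_second: float    # assumed sustained aggregate throughput
    utilization: float          # fraction of each hour serving paid traffic

    def cost_per_million_tokens(self) -> float:
        """Serving cost in USD per one million generated tokens."""
        if not 0 < self.utilization <= 1:
            raise ValueError("utilization must be in (0, 1]")
        if self.tokens_per_second <= 0:
            raise ValueError("tokens_per_second must be positive")
        hourly_cost = self.gpu_hourly_cost_usd * self.gpus
        billable_tokens_per_hour = self.tokens_per_second * 3600 * self.utilization
        return hourly_cost / billable_tokens_per_hour * 1_000_000


def gross_margin(price_per_million: float, cost_per_million: float) -> float:
    """Gross margin as a fraction of the price charged per million tokens."""
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return (price_per_million - cost_per_million) / price_per_million


# Illustrative numbers only; none of these come from the article.
PRICE_PER_MILLION_USD = 2.0
baseline = InferenceEndpoint(
    gpu_hourly_cost_usd=3.0, gpus=8, tokens_per_second=10_000, utilization=0.5
)
# Same hardware and price, but stack-level optimization doubles throughput.
optimized = InferenceEndpoint(
    gpu_hourly_cost_usd=3.0, gpus=8, tokens_per_second=20_000, utilization=0.5
)

for name, endpoint in (("baseline", baseline), ("optimized", optimized)):
    cost = endpoint.cost_per_million_tokens()
    margin = gross_margin(PRICE_PER_MILLION_USD, cost)
    print(f"{name}: ${cost:.2f} per 1M tokens, gross margin {margin:.0%}")
```

Under these assumed inputs, doubling throughput on the same hardware halves the cost per million tokens, which is the kind of lever a provider controlling both the infrastructure and the serving software could pull. Real deployments would also need to account for input versus output token pricing, batching effects on latency, and fixed costs that this sketch ignores.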

NVIDIA’s Expanding Investments in AI Infrastructure Companies

The Nebius investment fits into a broader pattern of NVIDIA investing in companies building AI infrastructure and deploying its systems. NVIDIA recently invested $2 billion in CoreWeave, another AI-focused cloud provider, and contributed $30 billion to a recent funding round for OpenAI. The company also participated in a $2 billion funding round for UK-based Nscale and has announced additional strategic partnerships with Lumentum and Coherent. CEO Jensen Huang has emphasized the concept of AI factories, where the output is tokens. The Nebius partnership—with its emphasis on early access to Rubin, Vera CPUs, and BlueField systems—extends this strategy by ensuring that the next generation of NVIDIA architectures is deployed at scale through a partner with deep software engineering capabilities.

The collaboration on inference optimization is particularly significant. NVIDIA’s Dynamo, which supports disaggregated serving and efficient inference routing, aligns naturally with Nebius’s Token Factory platform. Joint engineering on inference stacks could yield platform differentiation that makes Nebius infrastructure more efficient per token generated. Some observers have raised concerns that investments in companies that purchase NVIDIA systems could create circular financing dynamics. Where those investments underwrite capacity with positive unit economics, however, they can generate compounding returns rather than merely recycling capital.

What to Watch:

- Whether the Token Factory inference platform delivers measurable improvements in customer unit economics, particularly for AI product companies operating at the edge of profitability, where inference cost determines the viability of growth.

- The depth and speed of the Tavily integration into the broader Nebius stack, and whether the resulting agentic development environment achieves the cohesion needed to compete with hyperscaler agentic AI offerings from AWS, Azure, and Google Cloud.

- Whether inference stack collaboration with NVIDIA yields observable platform differentiation for developers, particularly through joint optimization of Dynamo and Token Factory serving infrastructure.

- How neocloud competitive dynamics evolve as supplier relationships deepen and the open-source model ecosystem continues to narrow the frontier gap with closed-source providers.

- The 5 GW deployment trajectory: Tracking Nebius’s actual infrastructure deployment against the ambitious 2030 target will provide the clearest measure of the partnership’s operational success.

See the full press release on the announcement on NVIDIA’s website.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually, informed by data and other information that might have been provided for validation, and are not those of Futurum as a whole.

Other Insights from Futurum:

NVIDIA Q4 FY 2026 Earnings Highlight Durable AI Infrastructure Demand

Can Micron’s Modular Memory Upgrade Help NVIDIA’s CPUs Outperform?

Does Nebius’ Acquisition of Tavily Create the Leading Agentic Cloud?

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.