The latest media appearances by Futurum Analysts.

For most of its life, Cursor has been an IDE. A very good one. But with the public beta of the Cursor SDK, the company is making a different kind of move — one that should get the attention of DevOps teams.

Building AI agents sounds straightforward until you actually do it. You need an agent to onboard a new employee. It has to create an Entra ID account, provision GitHub access, spin up cloud resources, create tasks in Azure DevOps, and send a welcome message in Teams. Five tools. Five different authentication models. Five different teams managing those tools.

Most AI coding tools do one thing well: Help developers write code faster. IBM wants to go further than that.

Arm this week made available a free toolkit for analyzing agentic artificial intelligence (AI) workloads as they are being developed by DevOps and platform engineering teams.

Anthropic has launched Claude Security in public beta for Claude Enterprise customers. The tool gives security teams a way to scan entire codebases for vulnerabilities — and generate targeted patches — without the usual back-and-forth that slows down remediation.

Intel has seen demand for Xeon servers outpace supply and its business mix shift toward AI during the first three months of the year.

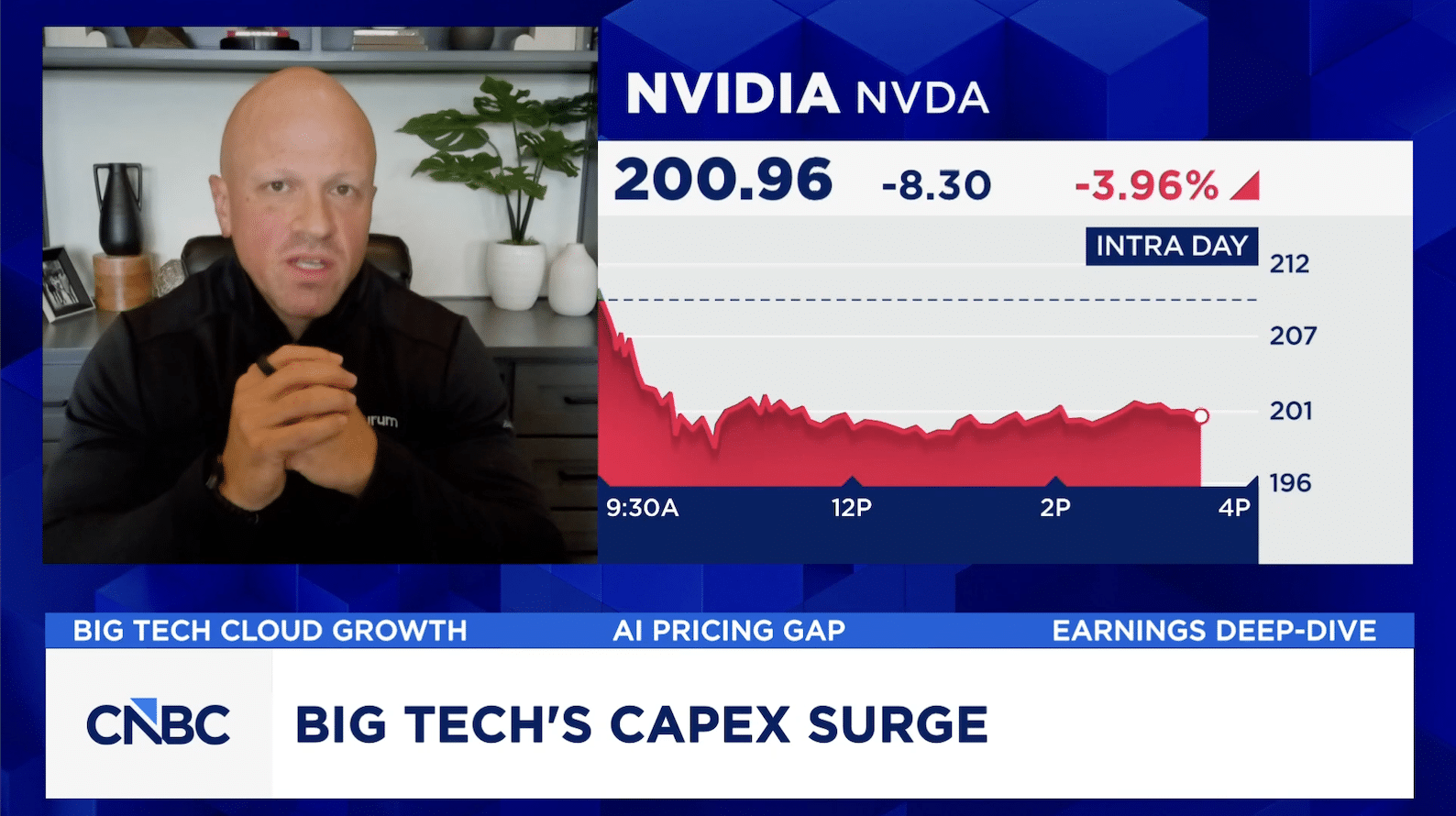

Olivier Blanchard breaks down Apple’s (AAPL) earnings setup as the company approaches a leadership transition and refocuses on hardware innovation. He argues Apple’s measured, late‑mover approach to AI helps it avoid costly capex pitfalls facing other big tech firms, while preserving flexibility through partnerships. Blanchard also highlights the growth of services, the iPhone 17 cycle, and Apple’s push into more affordable Macs as key drivers of long‑term value.

Microsoft, Google, Amazon, and Meta reported earnings last night, and three of them raised already-giant capex commitments. Cloud numbers justify the spend, but free cash flow is collapsing across the group, and Meta shows how fragile that bargain is. Daniel Newman of Futurum Group breaks it down. Plus: AI is getting pricier in America while Chinese alternatives (DeepSeek, Kimi, and others) run at a fraction of OpenAI and Anthropic’s cost. Futurum CEO Daniel Newman discusses the gap and what enterprises are doing about it.
