Analyst(s): Brendan Burke

Publication Date: April 2, 2026

Starcloud recently raised $170 million in Series A funding at a $1.1 billion valuation to scale its space-based computing infrastructure, becoming the fastest Y Combinator startup to reach unicorn status. This significant financial backing, coupled with new orbital hardware initiatives from NVIDIA and SpaceX, strongly validates the transition of hyperscale AI from terrestrial grids to low Earth orbit.

What is Covered in This Article:

- Starcloud secured $170 million in Series A funding, led by Benchmark and EQT Ventures, and reached a $1.1 billion valuation just 17 months after its Y Combinator demo day.

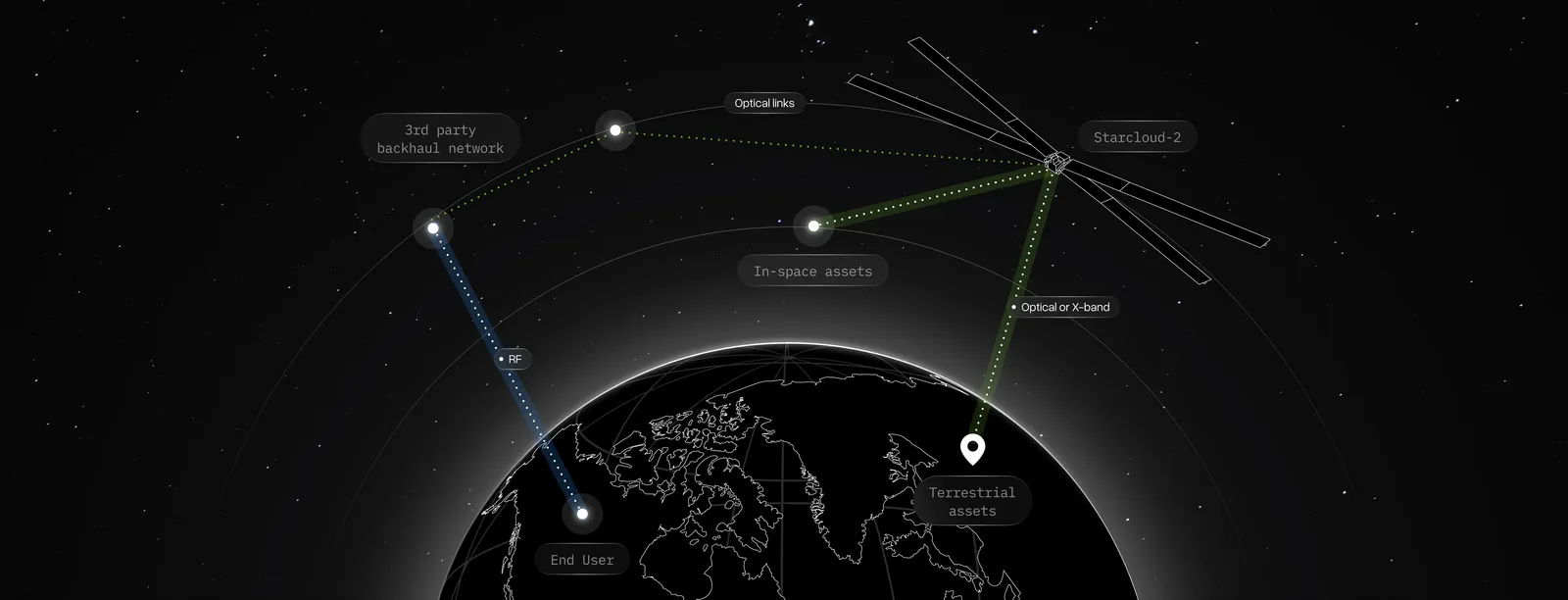

- The new capital will accelerate the manufacturing of Starcloud’s orbital data centers, including the Starcloud-2 satellite, which will feature NVIDIA Blackwell GPUs and run commercial workloads.

- SpaceX CEO Elon Musk introduced the Terafab initiative, outlining a vision to build a terawatt of compute power in space using the Starship rocket’s massive payload capacity.

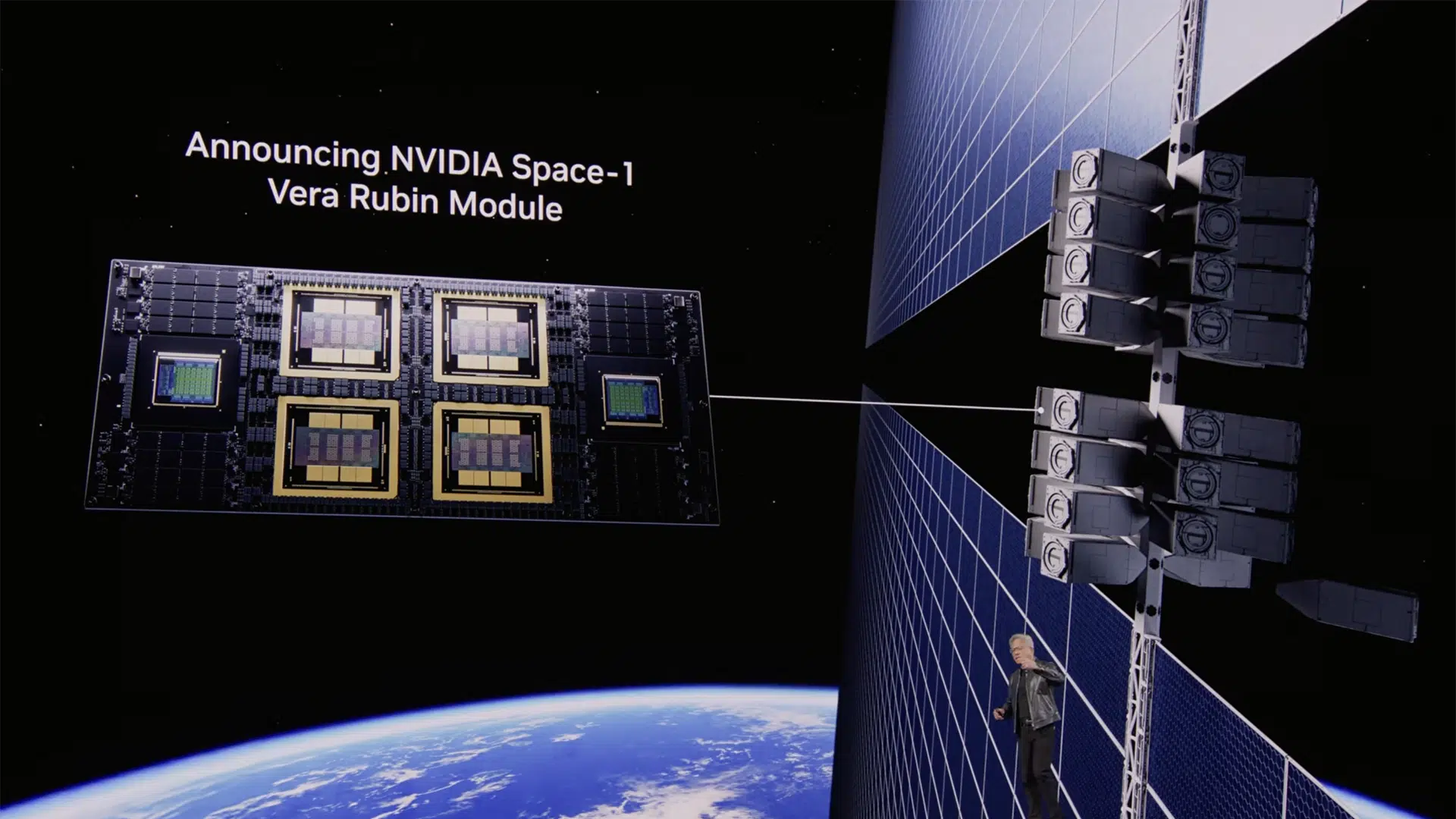

- NVIDIA launched its Space-1 Vera Rubin Module and IGX Thor platforms, explicitly engineered for data-center-class AI hardware in size-, weight-, and power-constrained orbital environments.

- NVIDIA specifically highlighted Starcloud as a partner working to bring hyperscale-class AI computing directly to orbit, enabling real-time data processing at the source.

The News: Starcloud has secured $170 million in Series A funding at a $1.1 billion valuation in a heavily oversubscribed round led by Benchmark and EQT Ventures. This milestone makes Starcloud the fastest startup in Y Combinator history to achieve unicorn status. The funding brings the company’s total capital raised to $200 million and will be used to establish a dedicated manufacturing facility, expand headcount, and procure future launch contracts. The capital influx will accelerate the development of the company’s upcoming Starcloud-2 and Starcloud-3 satellites. Starcloud-2 is set to launch later this year and will feature NVIDIA Blackwell B200 chips to run commercial edge and cloud workloads for customers, including Crusoe, AWS, and Google Cloud. This news aligns with NVIDIA’s recent unveiling of the Space-1 Vera Rubin Module for space-based inferencing, and SpaceX’s announcement of Terafab, a strategic effort to deploy a terawatt of compute in orbit.

Will Starcloud’s Orbital Data Centers Solve NVIDIA’s Terrestrial Energy Crisis?

Analyst Take: Starcloud’s Series A firmly establishes orbital data centers as a critical frontier in AI infrastructure. Led by blue-chip VC Benchmark and real-estate-adjacent EQT Ventures, this investment highlights a growing consensus that the terrestrial energy grid cannot sustain the exponential power demands of artificial intelligence. By leveraging space-based compute, orbital data centers bypass the multi-year permitting delays and land constraints plaguing Earth-bound projects.

A Freezing Cold Take on the AI Energy Crisis

The mainstream narrative assumes the AI energy crisis will be solved by a renaissance in terrestrial nuclear power and grid modernization. This is a fundamentally flawed assumption. The terrestrial data center market is fighting a losing battle against physics and bureaucracy. Starcloud CEO Philip Johnston argues that a new 100-megawatt energy project on Earth requires a 5- to 10-year lead time just for land and environmental permitting. Musk echoes this sentiment, noting that as we use up the “easy spots” for power generation on Earth, development becomes increasingly difficult and expensive due to “NIMBY” (Not In My Backyard) resistance.

Orbital data centers bypass these bottlenecks entirely. Space eliminates land permitting, and because satellites can be placed in sun-synchronous orbits, they receive near-continuous sunlight without the need for battery backup systems. As a result, space solar can be 8 times more efficient than terrestrial solar. Johnston notes that while the marginal cost of building data centers on Earth continually rises, the marginal cost in space declines as launch capacity scales and manufacturing rates increase. Musk goes so far as to predict that the cost of deploying AI in space will drop below terrestrial costs in just 2 to 3 years. The future of hyperscale AI may be in low Earth orbit.
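The "8 times" figure can be sanity-checked with a rough energy-yield calculation. The sketch below is illustrative only: it reads "efficient" as annual energy per square meter of panel, and the two capacity factors (`orbit_cf`, `ground_cf`) are assumed values, not figures from Starcloud or this article. The irradiance constants are the standard solar constant above the atmosphere and the standard test-condition irradiance at the surface.

```python
# Rough yield comparison; capacity factors are illustrative assumptions.
SOLAR_CONSTANT_W_M2 = 1361.0  # irradiance above the atmosphere
GROUND_PEAK_W_M2 = 1000.0     # standard test-condition irradiance at the surface
HOURS_PER_YEAR = 8760


def annual_yield_kwh_per_m2(irradiance_w_m2: float, capacity_factor: float) -> float:
    """Annual sunlight energy reaching one square meter of panel, in kWh."""
    return irradiance_w_m2 * capacity_factor * HOURS_PER_YEAR / 1000.0


def yield_ratio(orbit_cf: float = 0.99, ground_cf: float = 0.17) -> float:
    """Ratio of orbital to terrestrial annual yield per square meter.

    orbit_cf: assumed share of the year a sun-synchronous panel is lit.
    ground_cf: assumed terrestrial solar capacity factor (night, weather, sun angle).
    """
    orbit = annual_yield_kwh_per_m2(SOLAR_CONSTANT_W_M2, orbit_cf)
    ground = annual_yield_kwh_per_m2(GROUND_PEAK_W_M2, ground_cf)
    return orbit / ground


if __name__ == "__main__":
    print(f"Orbital vs. terrestrial yield: {yield_ratio():.1f}x")
```

Under these assumed inputs the ratio lands close to 8, but it is sensitive to the terrestrial capacity factor: a sunnier site with a higher `ground_cf` narrows the gap considerably.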

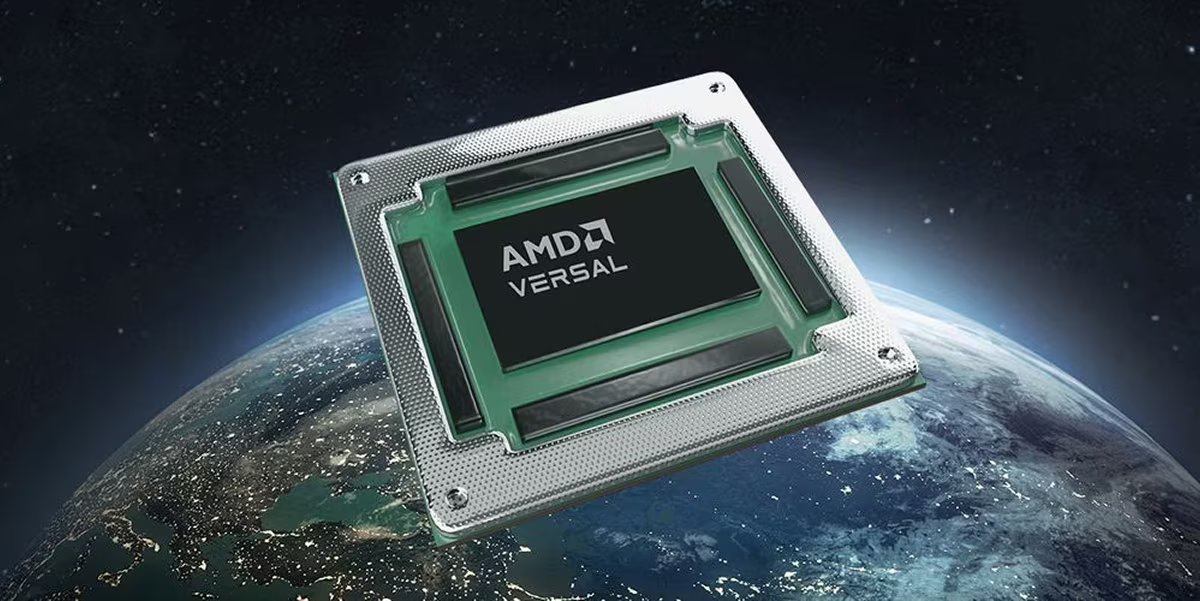

Validating the Tech Giants’ Astral Visions

While the orbital data center market is nascent, advanced silicon has been operating in the cosmos for years. AMD’s space-grade FPGAs have powered critical navigation and sampling instruments for over twenty years, including on the NASA Perseverance Mars rover. Blue Origin is using AMD’s Versal adaptive SoCs to develop flight computers for its Mark 2 lunar lander, while NASA’s NISAR mission relies on AMD technology to process massive volumes of synthetic aperture radar (SAR) data directly on board, bypassing the constraints of Earth-bound data transmission.

Starcloud’s rapid ascent to unicorn status provides undeniable market validation for the ambitious space roadmaps recently laid out by NVIDIA and SpaceX. NVIDIA announced its Space-1 Vera Rubin Module, engineered to deliver data-center-class AI inference in size-, weight-, and power-constrained environments. NVIDIA CEO Jensen Huang noted that intelligence must live wherever data is generated, specifically naming Starcloud as a partner in bringing hyperscale AI to orbit. Simultaneously, SpaceX CEO Elon Musk unveiled the Terafab initiative, a joint effort to build a terawatt of compute power in space. Musk emphasized that scaling civilizational power requires going to space, targeting 10 million tons to orbit per year using the Starship launch vehicle.

The Economics of Orbital Data Centers

Skeptics frequently point to the high launch costs and the thermal challenges of operating in a vacuum. However, Starcloud has already shown that commercial-off-the-shelf silicon can survive in space. Its Starcloud-1 module, launched in November 2025, successfully operated an NVIDIA H100 GPU in orbit, demonstrating AI model training and inference without a single restart failure from the chip itself. Looking ahead, future iterations like Starcloud-2 will feature massive low-cost, low-mass deployable radiators, which are intended to address the vacuum heat dissipation problem.

As SpaceX’s Starship vehicle drives down the marginal cost of launch, the economics of orbital data centers will flip. On Sequoia Capital’s Training Data podcast, Johnston estimated that the launch cost at which orbital deployment breaks even with terrestrial costs is around $500 per kilogram for GPU payloads. However, as the cost of permitted land on Earth continues to rise, that break-even threshold is moving closer to $1,000 per kilogram. Once launch costs drop below a few hundred dollars per kilogram, building in space becomes the clearly cheaper option. Starcloud estimates that orbital facilities will become cost-competitive with terrestrial data centers as soon as Starship is flying frequently, which is expected for commercial payloads by mid-to-late 2028.
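The break-even logic can be made concrete with a small sketch. This is not Starcloud's model: the function `break_even_launch_price` and every input value are hypothetical, chosen only so the outputs match the $500 and $1,000 per kilogram thresholds quoted above. It assumes orbital cost per kilowatt is hardware cost plus launch cost, and that break-even occurs when this equals the terrestrial cost per kilowatt.

```python
def break_even_launch_price(
    terrestrial_cost_per_kw: float,
    orbital_hardware_cost_per_kw: float,
    mass_kg_per_kw: float,
) -> float:
    """Launch price ($/kg) at which orbital and terrestrial cost per kW are equal.

    All three inputs are hypothetical placeholders:
      terrestrial_cost_per_kw      all-in Earth-side cost (land, permitting, power)
      orbital_hardware_cost_per_kw satellite hardware cost, excluding launch
      mass_kg_per_kw               launched mass needed per kW of compute
    """
    if mass_kg_per_kw <= 0:
        raise ValueError("mass_kg_per_kw must be positive")
    # Solve hardware + price * mass = terrestrial for price.
    return (terrestrial_cost_per_kw - orbital_hardware_cost_per_kw) / mass_kg_per_kw


if __name__ == "__main__":
    # Illustrative inputs only, not sourced figures.
    today = break_even_launch_price(15_000, 10_000, 10)
    later = break_even_launch_price(20_000, 10_000, 10)
    print(f"Break-even today: ${today:,.0f}/kg")
    print(f"Break-even after terrestrial costs rise: ${later:,.0f}/kg")
```

The sketch shows why the threshold moves upward: holding orbital hardware cost and mass fixed, every increase in terrestrial cost per kilowatt raises the launch price that space-based compute can tolerate.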

The Strategic Imperative for Orbital Infrastructure

Hyperscalers and AI developers who ignore the transition to orbital data centers risk being severely constrained by terrestrial power limits. With EQT Ventures—a firm whose parent owns over 70 terrestrial data centers—co-leading Starcloud’s Series A, traditional infrastructure players are clearly hedging their bets. Johnston projects that within 10 years, close to a trillion dollars per year in capital expenditure will be deployed into space-based compute. The next era of AI scaling will not be defined by terrestrial real estate, but by early movers securing the best orbits and highest launch cadences for their orbital data centers.

What to Watch:

- The deployment and operational success of Starcloud-2, which is slated to feature NVIDIA Blackwell chips and run commercial workloads for major hyperscalers.

- The launch cadence and payload capacity of SpaceX’s Starship, which is the critical catalyst required to make the economics of orbital data centers viable at gigawatt scale.

- Advancements in space-to-ground data transmission, such as optical laser mesh networks, which are necessary to prevent data bottlenecks between orbital data centers and terrestrial users.

- Regulatory shifts or orbital real estate competition as more traditional terrestrial data center operators seek prime sun-synchronous orbits to deploy their own orbital data centers.

See the complete press release on Starcloud Raises $170M Series A at $1.1bn Valuation Led by Benchmark and EQT Ventures on the Business Wire website.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.

Image Credit: NVIDIA

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.