Analyst(s): Brendan Burke

Publication Date: April 9, 2026

Anthropic’s TPU expansion with Google and Broadcom highlights rising compute demand, long-term infrastructure commitments, and the growing importance of custom silicon in AI market competition.

What is Covered in This Article:

- Anthropic signed a multi-gigawatt TPU compute agreement with Google and Broadcom, with capacity coming online from 2027.

- The deal expands Anthropic’s prior TPU collaboration and supports rising demand for Claude models, with run-rate revenue surpassing $30 billion.

- Broadcom benefits from long-term supply visibility, reinforcing its position in custom AI chips and optical networking.

- Google deepens its TPU ecosystem, while Anthropic maintains a multi-platform strategy across AWS, Google Cloud, and Microsoft Azure.

- The Futurum Signal on AI Accelerators positions Google’s TPU platform as the gold standard for linear scale-out efficiency while identifying ecosystem alignment and go-to-market execution as areas requiring improvement.

The News: Anthropic announced a new agreement with Google and Broadcom to secure multiple gigawatts of next-generation TPU compute capacity, expected to come online starting in 2027. The deal expands Anthropic’s prior TPU collaboration and supports growing demand for its Claude models, with the majority of infrastructure to be deployed in the US as part of its broader $50 billion compute investment commitment.

Anthropic’s demand has accelerated significantly, with run-rate revenue surpassing $30 billion, up from approximately $9 billion at the end of 2025, and over 1,000 enterprise customers now spending more than $1 million annually. The agreement also strengthens Broadcom’s role in supplying custom AI chips for Google TPUs, with estimates indicating approximately 3.5 gigawatts of compute capacity tied to the partnership.

The 300 Gigawatt Path

In a podcast with Dwarkesh Patel, Dario Amodei laid out a vision in which the AI industry builds roughly 100 gigawatts of compute capacity by 2028 and potentially 300 gigawatts by 2029. He suggests that Anthropic will contribute a significant share of those capacity additions, subject to demand. Reaching those numbers requires solving two simultaneous bottlenecks: chip supply and power infrastructure. The Google-Broadcom partnership directly addresses the first and most technically constrained of the two.

Broadcom may account for approximately 10% of TSMC’s revenue in 2026. If that share carried over to wafer allocation by 2030, when TSMC’s combined leading-edge output across 3nm, 2nm, and sub-2nm nodes could reach 400,000–500,000 wafer starts per month, it would imply 40,000–50,000 wafer starts per month for Broadcom’s designs. At current AI accelerator die sizes and yields, producing one megawatt of GPU-class silicon requires roughly 48 logic wafers plus equivalent packaging capacity.

At 50,000 wafer starts per month, Broadcom’s TSMC allocation could theoretically support approximately 12–13 gigawatts of annualized AI accelerator production, comprising a significant portion of a market-leading compute portfolio. Broadcom’s CEO Hock Tan has publicly targeted more than $100 billion in AI revenue by 2027, and multi-year supply commitments of this nature provide the demand signal required to justify continued capacity expansion at TSMC. In effect, Anthropic’s commitment helps pull forward the very manufacturing capacity it needs.
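The wafer-to-gigawatt arithmetic above can be checked with a short back-of-envelope script. This is a minimal sketch, not a supply model: the inputs are the article’s own estimates, the function names are illustrative, and it assumes that Broadcom’s revenue share maps one-to-one to wafer share and that packaging capacity is not a separate constraint.

```python
# Back-of-envelope check of the wafer-to-gigawatt figures quoted in the text.
# All inputs are the article's estimates; function names are illustrative only.

WAFERS_PER_MW = 48      # logic wafers per megawatt of accelerator silicon (article's estimate)
MONTHS_PER_YEAR = 12


def broadcom_wafer_starts(tsmc_wspm: float, share: float = 0.10) -> float:
    """Monthly wafer starts implied by applying a share to TSMC leading-edge output.

    Assumption: revenue share is used as a proxy for wafer share.
    """
    return tsmc_wspm * share


def annual_gigawatts(wspm: float, wafers_per_mw: float = WAFERS_PER_MW) -> float:
    """Annualized accelerator output in gigawatts for a monthly wafer allocation."""
    megawatts = wspm * MONTHS_PER_YEAR / wafers_per_mw
    return megawatts / 1000


if __name__ == "__main__":
    # Low and high ends of the projected 2030 leading-edge capacity range.
    for tsmc_wspm in (400_000, 500_000):
        wspm = broadcom_wafer_starts(tsmc_wspm)
        gw = annual_gigawatts(wspm)
        print(f"{tsmc_wspm:,} TSMC wspm -> {wspm:,.0f} Broadcom wspm -> {gw:.1f} GW/yr")
```

Under these assumptions the range works out to about 10 gigawatts per year at 40,000 wafer starts per month and 12.5 gigawatts per year at 50,000, consistent with the 12–13 gigawatt figure cited for the upper bound.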

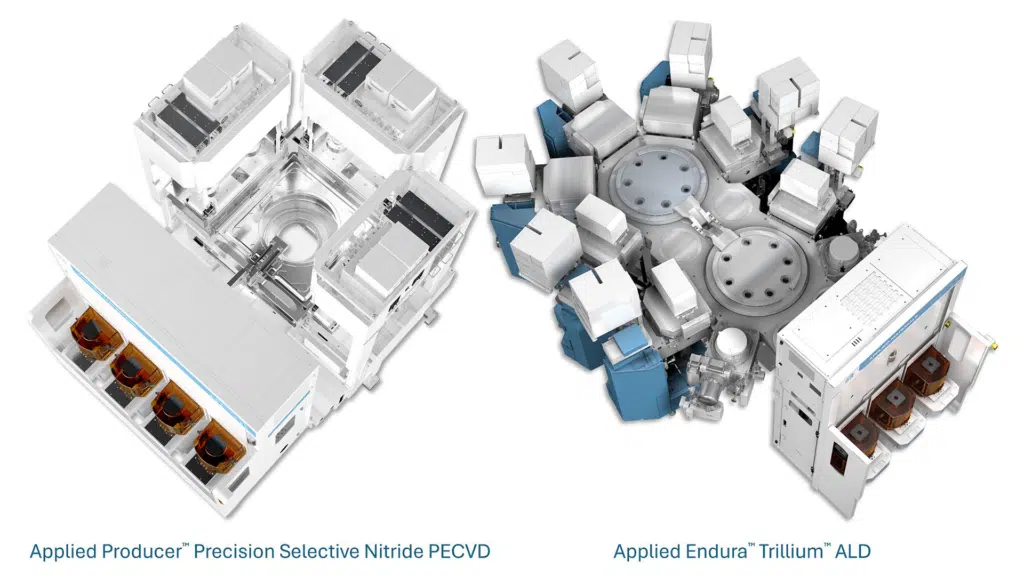

Anthropic’s Software-Silicon Feedback Loop with Google

The partnership’s deepest strategic advantage lies not in raw capacity but in the tight integration between Anthropic’s model architecture and Google’s TPU hardware-software stack. Google’s Ironwood (TPUv7) architecture features co-packaged optics and a 10x performance leap for agentic workloads. The integration between Ironwood’s optics and mixture-of-experts model architectures is specifically designed to improve inference efficiency compared to general-purpose hardware. The forthcoming Zebrafish (TPUv8) architecture will extend this advantage.

Anthropic’s partnership with Google since 2022 has created a software-silicon feedback loop developed over generations. Anthropic can co-optimize Claude’s inference and training workloads against TPU-specific hardware features, while Google can tune future TPU generations against the demands of one of the world’s most commercially successful model families. The Futurum Signal on AI Accelerators identifies Google’s TPU platform as “the gold standard for linear scale-out efficiency,” supported by a vertically integrated model-to-silicon stack. Anthropic is the first major external customer to exploit this stack at scale.

More critically, OpenAI only recently formed a partnership with Google. The software collaboration between Anthropic and Google, which enables workload-specific hardware optimization, is a moat that OpenAI cannot swiftly cross, given the engineering challenges of deploying frontier models on heterogeneous hardware. Procurement alone cannot close this gap. It requires a partnership architecture developed over hardware generations, which leaves OpenAI behind.

TPU Ecosystem Strengthens Broadcom’s and Google’s Position

Broadcom’s role in this partnership extends beyond chip design. Broadcom also contributes optical interconnects that enable a world size of up to 9,216 accelerators. This end-to-end role means Broadcom is not a contract manufacturer for individual TPU chips but the integration layer for Google’s entire AI cluster architecture. Its customer base for custom XPU design now includes Google, Meta, OpenAI, ByteDance, xAI, and Apple, but the multi-year nature of the Anthropic-Google agreement provides a level of revenue visibility that spot procurement cannot match. These agreements strengthen Broadcom’s TPU partnership and support the company’s expectations of AI-related revenue exceeding $100 billion by 2027.

For Google, securing an external anchor customer generating over $30 billion in annual revenue provides the commercial validation needed to transition TPU from a primarily internal consumption platform to a commercial-grade infrastructure offering for elite AI labs. Futurum’s Signal report notes that Google’s current “customer experience model is oriented toward technical early adopters requiring engineering resources for implementation,” and that “successfully democratizing this powerful hardware through an enhanced software ecosystem will be critical for achieving broader market penetration.” The Anthropic deal represents a concrete step in this direction, expanding TPU’s external customer base beyond Google’s own Gemini models.

Multi-Platform Strategy Reflects Infrastructure Hedging

Anthropic continues to deploy Claude across AWS Trainium, Google TPUs, and NVIDIA GPUs, maintaining a multi-platform strategy. Amazon remains its primary cloud provider and training partner. This deliberate diversification provides workload optimization flexibility and insulates Anthropic from single-vendor dependency.

However, the Google-Broadcom agreement represents a qualitative shift. TPU capacity at this scale requires deep integration between model software and hardware architecture. Anthropic is effectively making a strategic bet that TPU-optimized inference will deliver cost and performance advantages for agentic workloads that general-purpose hardware cannot match. The multi-platform approach hedges risk while the TPU commitment captures upside for agentic inference at scale.

Competitive Implications

The deal reframes the frontier AI competition along a new axis. The question is no longer simply who has the best model or the most GPUs. It is who has the deepest integration between model architecture, custom silicon, and advanced semiconductor manufacturing capacity. Anthropic, through Google and Broadcom, has assembled a vertically aligned compute supply chain with TSMC advanced packaging, Broadcom’s custom silicon design, and Google’s TPU architecture and co-packaged optics.

Each link in this chain is supply-constrained and relationship-dependent. Replicating it requires not just capital but partnerships that present structural barriers to competitors. In a world where compute availability is the gating factor for AI growth, the distinction between buying capacity and co-designing it may prove decisive.

What to Watch:

- Deployment timelines for the multi-gigawatt TPU capacity starting in 2027, and whether scaling aligns with demand growth

- How deeply Anthropic integrates Claude’s mixture-of-experts architecture with Ironwood’s co-packaged optics to achieve inference efficiency gains versus GPU-based competitors

- Whether Microsoft accelerates its Maia custom silicon program or deepens NVIDIA commitments in response to the structural advantage this deal creates for Anthropic

- The extent to which Broadcom’s TSMC allocation becomes a competitive moat for Broadcom and its customers

- Whether OpenAI pursues its own custom silicon partnership, and with whom, given that the Google pathway is closed and Intel’s foundry capabilities remain unproven at frontier scale

See the complete press release on Anthropic’s partnership with Google and Broadcom for next-generation TPU capacity on the Anthropic website.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.