Analyst(s): Brendan Burke

Publication Date: April 20, 2026

Meta has expanded its partnership with Broadcom to co-develop multiple generations of MTIA AI chips, scaling to multi-gigawatt deployments. The move reflects a broader shift toward custom silicon and system-level optimization for large-scale AI workloads.

What is Covered in This Article:

- Meta and Broadcom expand their partnership to co-develop multiple generations of MTIA custom AI chips.

- The agreement includes an initial deployment exceeding 1GW, with a broader multi-gigawatt rollout planned.

- Broadcom will support chip design, advanced packaging, and Ethernet-based networking for AI clusters.

- MTIA chips are positioned for inference, recommendation systems, and generative AI workloads at scale.

- The partnership reflects Meta’s portfolio approach to AI silicon, aligning specific accelerators to workloads.

The News: Meta has expanded its partnership with Broadcom to co-develop multiple generations of its Meta Training and Inference Accelerator (MTIA) chips, which support AI workloads across its platforms. The agreement includes an initial deployment exceeding 1GW of custom silicon, forming the first phase of a broader multi-gigawatt rollout aimed at supporting large-scale AI infrastructure.

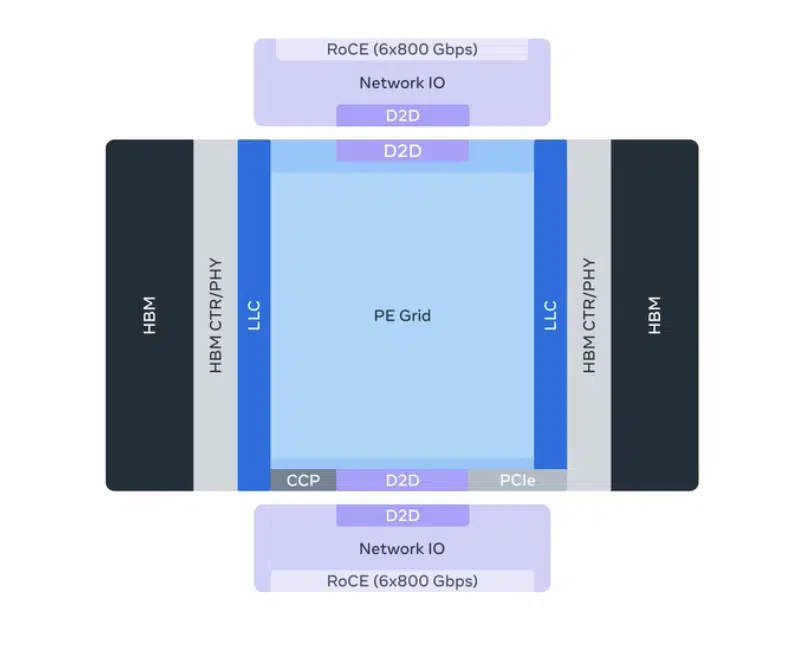

Broadcom will work with Meta across chip design, advanced packaging, and networking, leveraging its XPU platform and Ethernet technologies to enable high-bandwidth connectivity across AI compute clusters. The collaboration is expected to extend through multiple silicon generations, supporting inference, recommendation systems, and generative AI workloads as Meta scales its infrastructure.

Meta’s MTIA Partnership With Broadcom Solidifies the Future of XPUs in Inference Optimization

Analyst Take: Meta positions MTIA as a purpose-built accelerator for inference and recommendation workloads, while continuing to rely on a portfolio approach to AI silicon. The partnership also integrates Ethernet-based networking to support high-bandwidth AI clusters at scale. This MTIA partnership reflects Meta’s effort to align infrastructure design with workload-specific optimization.

Multi-Generation MTIA Roadmap Reflects Sustained Custom Silicon Investment

Meta is developing and deploying four new generations of MTIA chips within the next two years, targeting recommendation systems and generative AI workloads. The roadmap establishes a continuous pipeline of silicon iterations rather than a one-time deployment. Broadcom’s XPU platform enables tight coupling of logic, memory, and high-speed I/O across these generations, supporting long-term co-design.

Meta continues to follow a portfolio approach to AI silicon, aligning specific accelerators to distinct workloads rather than relying solely on GPUs. MTIA is designed primarily for inference and recommendation workloads, which are more predictable than model training and easier to optimize at scale. This mirrors broader enterprise behavior: according to Futurum’s 2H 2025 Data Center Semiconductors Decision Maker Survey, inference is becoming the center of gravity for AI investment as enterprises prioritize scaling inference workloads efficiently. This positioning suggests that MTIA plays a targeted role in lowering cost per inference rather than replacing broader compute architectures.

System-Level Optimization Shifts Focus to Networking and Interconnects

The expanded partnership extends beyond silicon into system-level infrastructure, particularly networking and packaging. Broadcom provides Ethernet-based networking technologies to enable scale-up, scale-out, and scale-across connectivity in AI clusters. This reflects a shift in which bottlenecks are increasingly tied to data movement and interconnect performance rather than raw compute. Broadcom’s strength in networking, including high-speed SerDes and switching technologies, positions it to address these constraints at scale. According to Futurum’s 2H 2025 Data Center Semiconductors analysis, Broadcom’s AI networking revenue grew 60% year-over-year and accounted for one-third of its total AI revenue, underscoring the importance of connectivity in AI infrastructure.

Multi-Gigawatt Deployment Highlights Physical Infrastructure Constraints

The agreement includes an initial deployment exceeding 1GW, forming the first phase of a broader multi-gigawatt rollout. This scale introduces constraints beyond silicon, including power delivery, cooling, and network architecture. As deployments expand, efficiency metrics such as performance per watt and cost per inference become critical decision factors. According to Futurum’s 2H 2025 Data Center Semiconductors Decision Maker Survey, physical constraints such as power, cooling, and interconnect limitations have overtaken budget as the primary barrier to scaling AI infrastructure. This indicates that scaling MTIA successfully depends as much on infrastructure design as on silicon capability.

What to Watch:

- How multi-gigawatt deployments strain power delivery, cooling, and network architecture as infrastructure scales.

- How far MTIA’s focus on inference workloads limits its role relative to GPUs in training environments.

- How Ethernet-based networking sustains low-latency performance across expanding AI compute clusters.

- Whether custom silicon strategies will deepen AI workload optimization across multiple generations to deliver cost and efficiency gains.

See the complete press release on the Broadcom website for details of the expanded partnership between Meta and Broadcom to support MTIA deployment.

Declaration of generative AI and AI-assisted technologies in the writing process: This content has been generated with the support of artificial intelligence technologies. Due to the fast pace of content creation and the continuous evolution of data and information, The Futurum Group and its analysts strive to ensure the accuracy and factual integrity of the information presented. However, the opinions and interpretations expressed in this content reflect those of the individual author/analyst. The Futurum Group makes no guarantees regarding the completeness, accuracy, or reliability of any information contained herein. Readers are encouraged to verify facts independently and consult relevant sources for further clarification.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually, and to data and other information that might have been provided for validation, and are not those of Futurum as a whole.

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts degree from Amherst College.