Analyst(s): Tom Hollingsworth

Publication Date: March 23, 2026

NVIDIA and T-Mobile, along with several partners, are integrating physical AI applications onto AI RAN-ready infrastructure. This signals a pivot from cloud-centric AI to distributed, network-embedded intelligence. The move isn’t just about faster inference at the edge. It’s a shot across the bow for hyperscalers and telcos clinging to legacy architectures. At stake is who owns the future of real-time, location-aware AI services, and whether NVIDIA’s ecosystem muscle can overcome the inertia of the status quo.

What is Covered in This Article:

- NVIDIA and T-Mobile’s push to embed AI directly into network infrastructure

- Shifts in power dynamics between hyperscalers, telcos, and edge AI vendors

- Execution risks tied to security, interoperability, and developer adoption

- Contrarian view on the real bottleneck: not hardware, but operational trust

- Competitive implications for AWS, Google, and Microsoft’s edge strategies

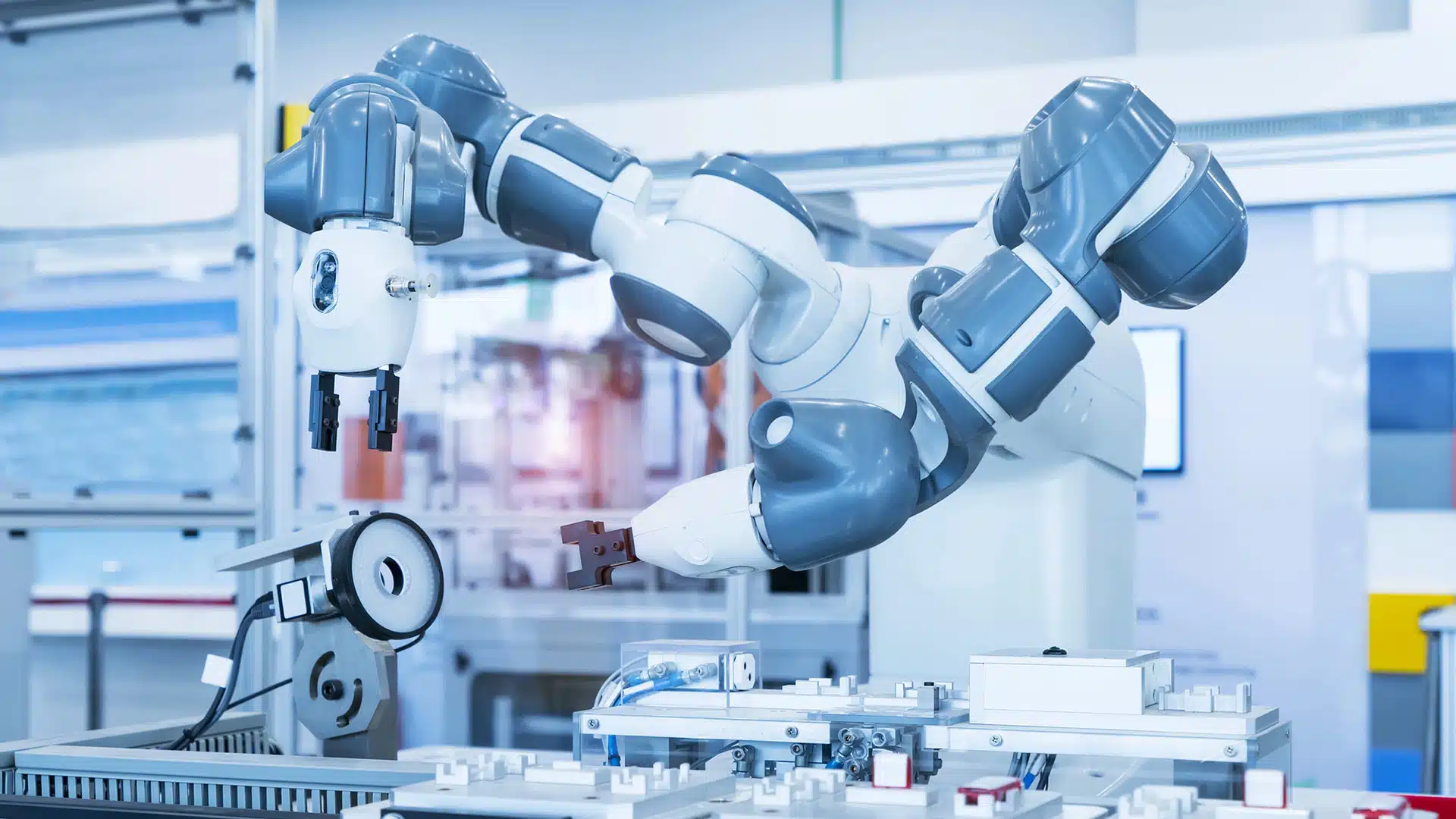

The News: NVIDIA, T-Mobile, and a coalition of partners have announced the integration of physical AI applications onto AI RAN-ready infrastructure, moving AI workloads out of centralized data centers and directly into the fabric of mobile networks 1. The collaboration promises lower latency, improved reliability, and the ability to run advanced AI models closer to where data is generated: think autonomous vehicles, industrial robotics, and real-time video analytics. By leveraging T-Mobile’s expansive 5G network, NVIDIA GPUs, and partner software stacks, the alliance aims to accelerate enterprise adoption of edge-native AI. This is more than a technical milestone. It’s a strategic bet that the next stage of AI value creation will happen at the network edge, not just in hyperscale clouds. Futurum’s 1H 2025 AI Platforms Decision Maker Survey found that 55% of organizations are still in research or pilot phases for agentic AI, but those that have deployed edge AI report significant ROI gains. The move puts pressure on competing offerings, such as AWS Wavelength, Google Distributed Cloud, and Azure Edge Zones, to prove that they aren’t just cloud extensions but robust, real-time AI platforms in their own right.

Does NVIDIA’s Physical AI Gambit with T-Mobile Redraw the Edge Compute Map?

Analyst Take: This announcement is less about boxes and chips and more about a power grab for where AI gets to live and who controls the value chain. NVIDIA and T-Mobile are making a play to move AI out of the safe, climate-controlled walls of the data center and into the wild, where work happens. That means factory floors, intersections, cell towers, and anywhere else latency matters.

Edge AI: The New Battleground for Platform Dominance

The structural shift here is unmistakable. By embedding AI directly into RAN infrastructure, NVIDIA and T-Mobile are asserting that the future of AI isn’t limited to massive training runs in the cloud. It’s about inference and decision-making at the point of action. This move threatens the traditional power base of hyperscalers, who have long depended on keeping enterprise AI workloads in their walled gardens. For telcos, it’s a rare shot at platform relevance beyond connectivity. If NVIDIA’s model sticks, we’ll see a new axis of competition. The contest won’t be only about who has the best AI model, but about who can operationalize it at the edge with reliability, security, and scale. The implication for enterprises is that an AI strategy can’t be cloud-only. Ignore edge-native architectures, and you’ll be locked out of the next wave of real-time, revenue-generating applications.

Execution Risk: Security, Interoperability, and the Trust Gap

Moving AI out of the data center isn’t just a technical migration. It’s an operational minefield. Futurum’s Enterprise Data Survey shows 78% of CIOs flag security, compliance, and data control as the top barriers to scaling AI. Running models in the wild means more attack surfaces, more regulatory scrutiny, and more complexity in orchestration. Interoperability is another headache. With every vendor pushing their own flavor of edge stack, the risk of fragmentation is real. If NVIDIA and T-Mobile can’t convince enterprises and ISVs that their platform is open, secure, and future-proof, adoption will stall at the pilot stage. The challenge isn’t hardware performance. It’s trust. Enterprises will demand validated reference architectures, ironclad SLAs, and evidence that these edge AI deployments won’t become tomorrow’s security headlines.

The Contrarian Take: The Real Bottleneck Isn’t Silicon, It’s Operations

Everyone obsesses over chip performance, but the real limiting factor for edge AI is operational maturity. Futurum’s research shows that only 8.8% of enterprises cite agentic features as a top-3 selection criterion, even as vendors race to tout ‘autonomous everything.’ The bottleneck is process, not silicon. Who manages updates, monitors drift, patches vulnerabilities, and ensures compliance when inference happens across hundreds or thousands of sites? If NVIDIA and T-Mobile can’t answer these questions better than the hyperscalers, they’ll win a few high-profile pilots and lose the war for mainstream adoption. The industry’s dirty secret: most enterprises still lack the operational discipline to manage distributed AI at scale. Until that changes, edge AI will remain the domain of the bold and the well-resourced.

What to Watch:

- Enterprise reference customers (Q2–Q4 2026): Does any Fortune 500 company publicly commit to production deployments on NVIDIA/T-Mobile’s platform?

- Interoperability announcements (6–12 months): Will NVIDIA and T-Mobile support open standards (MCP, A2A, ANS) or double down on proprietary stacks?

- Security incidents or compliance failures (12 months): Do edge AI deployments become targets for new classes of attacks or regulatory investigations?

- Competitive counter-moves (2026): Do AWS, Google, or Microsoft announce deeper telco integrations, or do they stick to cloud-edge hybrids?

Read the full press release on T-Mobile’s website.

Declaration of generative AI and AI-assisted technologies in the writing process: This content has been generated with the support of artificial intelligence technologies. Due to the fast pace of content creation and the continuous evolution of data and information, The Futurum Group and its analysts strive to ensure the accuracy and factual integrity of the information presented. However, the opinions and interpretations expressed in this content reflect those of the individual author/analyst. The Futurum Group makes no guarantees regarding the completeness, accuracy, or reliability of any information contained herein. Readers are encouraged to verify facts independently and consult relevant sources for further clarification.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.
