Analyst(s): Brendan Burke

Publication Date: April 13, 2026

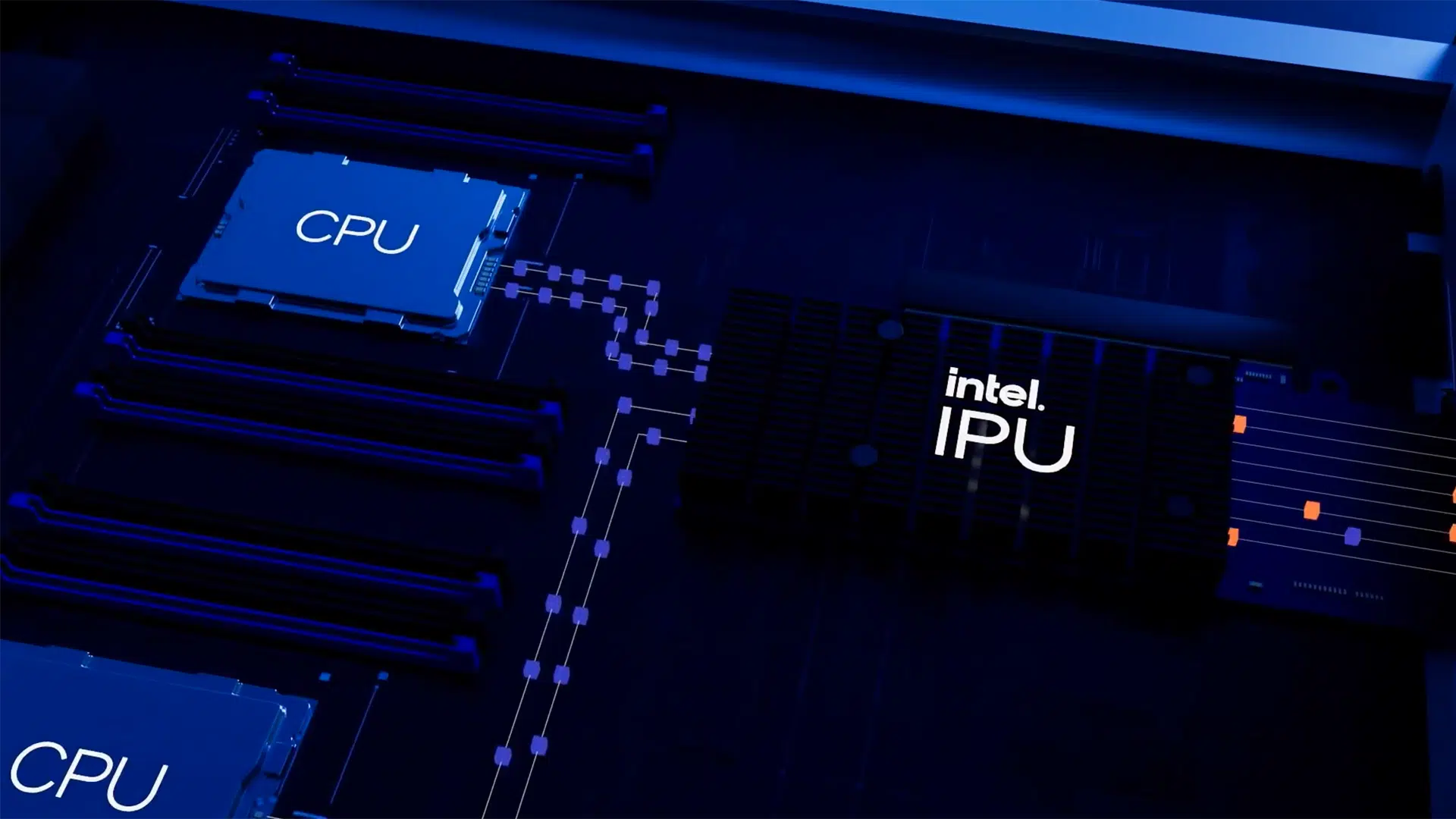

Intel and Google have announced a multiyear collaboration to advance AI infrastructure through next-generation Xeon CPUs and co-developed custom ASIC-based infrastructure processing units. The partnership signals a strategic reframing of the CPU and IPU as essential components of balanced, heterogeneous AI systems rather than secondary support for GPU accelerators.

What is Covered in This Article:

- Intel and Google’s multiyear AI infrastructure collaboration

- The expanding role of CPUs in inference-heavy AI pipelines

- Custom IPU co-development for hyperscale infrastructure offload

- Intel’s architectural narrative amid a GPU-dominated market

- Competitive implications for the data center semiconductor landscape

The News: Intel and Google announced on April 9, 2026, a multiyear collaboration to advance the next generation of AI and cloud infrastructure. The partnership centers on aligning across multiple generations of Intel Xeon processors to improve performance, energy efficiency, and total cost of ownership across Google’s global infrastructure, while expanding co-development of custom ASIC-based infrastructure processing units that offload networking, storage, and security functions from host CPUs. Google Cloud continues to deploy Intel Xeon processors across its workload-optimized instances, including Xeon 6 processors powering C4 and N4 instances for workloads spanning AI training coordination, latency-sensitive inference, and general-purpose computing.

“AI is reshaping how infrastructure is built and scaled,” said Lip-Bu Tan, CEO of Intel. “Scaling AI requires more than accelerators — it requires balanced systems. CPUs and IPUs are central to delivering the performance, efficiency, and flexibility modern AI workloads demand.”

Will Intel Xeon CPUs Increase Google’s CPU:XPU Ratio?

Analyst Take: The Intel-Google collaboration arrives at a moment when the AI infrastructure conversation is being forced to reckon with the limitations of an accelerator-only buildout strategy. For the past three years, data center investment narratives have been dominated by GPU procurement, with CPUs treated as commodity housekeeping components. Intel’s partnership with Google — and their accompanying white paper on the rising CPU-to-GPU ratio — represents a coordinated effort to challenge that framing by positioning CPUs and IPUs as first-class determinants of AI system throughput. Futurum Research’s own analysis has identified a CPU resurgence driven by agentic reasoning and reinforcement learning, with high-core-count server processors from Intel and AMD effectively sold out due to hyperscaler demand. The critical question is whether supply can keep up with demand, given the rapid inflection in CPU usage.

Inference Economics Demand More Than Accelerator Throughput

The collaboration’s emphasis on inference workloads reflects a structural shift that is now well documented across the data center semiconductor market. Intel’s white paper notes that inference pipelines are dominated by CPU-bound operations (tokenization, batching, key-value cache management, routing, and response formatting) that bracket comparatively lightweight GPU forward passes. Google’s deployment of Xeon 6 processors across its C4 and N4 instances for latency-sensitive inference workloads validates this architectural reality in production. The white paper cites third-party estimates that inference will account for two-thirds of all AI compute in 2026, along with Lenovo CEO Yuanqing Yang’s statement at CES 2026 that the training-to-inference ratio is inverting from 80/20 to 20/80. Futurum Research’s data center semiconductor analysis projects CPU demand growing at a 28.3% five-year compound annual growth rate, a figure that reflects inference-driven scaling rather than training expansion alone. Google’s Xeon commitment is not a legacy holdover but a deliberate architectural choice shaped by the economics of inference at hyperscale.
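As a rough illustration of the two quantitative points in this section, the Python sketch below compounds the 28.3% growth rate over five years and computes the CPU share of a single inference request. The per-stage timings are hypothetical placeholders chosen for illustration, not figures from Intel’s white paper or Futurum’s research, and the function names are likewise illustrative.

```python
# Sketch only: stage timings are HYPOTHETICAL, not measured or published values.

def cagr_multiple(rate: float, years: int) -> float:
    """Cumulative growth multiple implied by a compound annual growth rate."""
    return (1.0 + rate) ** years


def cpu_time_share(stage_ms: dict[str, float], gpu_stages: set[str]) -> float:
    """Fraction of end-to-end request time spent in stages that run on the CPU."""
    total = sum(stage_ms.values())
    cpu = sum(ms for name, ms in stage_ms.items() if name not in gpu_stages)
    return cpu / total


# Hypothetical per-request timings (milliseconds) for the stages named in the text.
HYPOTHETICAL_STAGE_MS = {
    "tokenization": 2.0,
    "batching": 3.0,
    "kv_cache_management": 4.0,
    "routing": 1.0,
    "gpu_forward_pass": 8.0,
    "response_formatting": 2.0,
}
GPU_STAGES = {"gpu_forward_pass"}

if __name__ == "__main__":
    # 28.3% compounded over five years is roughly a 3.5x increase.
    print(f"5-year multiple at 28.3% CAGR: {cagr_multiple(0.283, 5):.2f}x")
    share = cpu_time_share(HYPOTHETICAL_STAGE_MS, GPU_STAGES)
    print(f"CPU share of request time (hypothetical timings): {share:.0%}")
```

Under these assumed timings the CPU-side stages account for more of the request than the forward pass does, which is the pattern the white paper describes. Real shares depend on model size, batch policy, and hardware.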

Custom IPUs Redefine the Infrastructure Offload Layer

The expansion of Intel-Google co-development on custom ASIC-based IPUs introduces a dimension of the collaboration that extends beyond the CPU narrative. IPUs are programmable accelerators that offload networking, storage, and security functions from host CPUs, improving utilization and enabling more predictable performance across hyperscale environments. By handling infrastructure tasks traditionally managed by CPUs, IPUs unlock greater effective compute capacity without increasing overall system complexity.
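The capacity argument can be sketched with simple arithmetic. The Python example below assumes a hypothetical host where a fixed fraction of cores is consumed by networking, storage, and security tasks, and estimates how many cores become available to workloads once an IPU takes over some or all of that work. The core count and overhead fraction are illustrative assumptions, not figures from Intel or Google.

```python
# Sketch only: core count and overhead fraction are HYPOTHETICAL inputs.

def effective_host_cores(
    total_cores: int,
    infra_fraction: float,
    offloaded_fraction: float,
) -> float:
    """Cores left for workloads after infrastructure overhead.

    infra_fraction: share of host cores spent on networking, storage, and
        security when nothing is offloaded (assumed value).
    offloaded_fraction: share of that overhead moved to the IPU (0.0 to 1.0).
    """
    if not (0.0 <= infra_fraction <= 1.0 and 0.0 <= offloaded_fraction <= 1.0):
        raise ValueError("fractions must be between 0.0 and 1.0")
    remaining_overhead = infra_fraction * (1.0 - offloaded_fraction)
    return total_cores * (1.0 - remaining_overhead)


if __name__ == "__main__":
    cores = 128          # hypothetical host core count
    overhead = 0.25      # hypothetical infrastructure overhead
    before = effective_host_cores(cores, overhead, offloaded_fraction=0.0)
    after = effective_host_cores(cores, overhead, offloaded_fraction=1.0)
    print(f"Workload cores without offload: {before:.0f}")
    print(f"Workload cores with full offload: {after:.0f}")
    print(f"Relative gain: {after / before - 1:.1%}")
```

The model ignores second-order effects such as cache and memory-bandwidth contention, so it is best read as an upper-level planning estimate rather than a performance prediction.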

The co-development model also signals that Google sees value in purpose-built silicon designed to its specifications rather than relying solely on off-the-shelf SmartNICs or DPUs from competing vendors. Intel’s ability to execute on custom ASIC co-development at scale is a meaningful test of its foundry and advanced packaging ambitions under CEO Lip-Bu Tan’s restructuring. IPU co-development may prove to be a more strategically significant element of this partnership than the Xeon alignment, as it ties Google to Intel’s custom silicon capabilities across multiple hardware generations.

A Hyperscaler Endorsement at a Critical Moment for Intel

Google’s public commitment to Intel’s Xeon roadmap carries weight that extends beyond the technical merits of the collaboration. Intel has faced sustained competitive pressure from AMD’s EPYC processors in the data center CPU market and has struggled to establish meaningful share in the GPU accelerator segment against NVIDIA. A multiyear, multigenerational alignment with one of the world’s largest cloud providers offers Intel a credibility signal at a moment when its strategic direction under Lip-Bu Tan is still being evaluated by the market. Amin Vahdat, Google’s SVP and Chief Technologist for AI Infrastructure, described Intel as “a trusted partner for nearly two decades,” explicitly tying the relationship to roadmap confidence rather than legacy inertia.

Futurum’s analysis notes that Intel remains a leader in CPU solutions for data centers but lags behind NVIDIA in GPU adoption — a gap this partnership does not directly address. The implication is that the collaboration reinforces Intel’s CPU and custom silicon positioning but does not resolve the broader competitive question of whether Intel can participate in the accelerator economics that currently drive the majority of data center semiconductor revenue growth.

Heterogeneous System Design Becomes the Competitive Battleground

The framing of this collaboration around “balanced systems” and “heterogeneous AI infrastructure” reflects a broader architectural shift that is reshaping vendor strategies across the semiconductor industry. Intel’s white paper argues explicitly that future AI infrastructure planning must model CPU growth as a first-class driver of cost, performance, and energy-per-token economics, a claim that aligns with Futurum Research’s finding that agentic AI workloads are pushing CPU-to-XPU ratios back toward 1:1. Google’s architecture, which combines Xeon CPUs, custom IPUs, and its own Tensor Processing Units, exemplifies the heterogeneous model that Intel is advocating, in which no single component class dominates system design.
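To show what treating the CPU-to-XPU ratio as a planning variable might look like, the Python sketch below converts an accelerator count and a target ratio into a CPU count. The fleet size and the 1:4 starting ratio are hypothetical assumptions for illustration; only the 1:1 endpoint comes from the finding cited above.

```python
# Sketch only: fleet size and the 1:4 baseline ratio are HYPOTHETICAL inputs.

def cpus_needed(xpus: int, cpu_parts: int, xpu_parts: int) -> int:
    """CPUs required for a fleet of XPUs at a cpu_parts:xpu_parts ratio.

    Uses integer ceiling division so a partial CPU rounds up to a whole one.
    """
    if xpus < 0 or cpu_parts <= 0 or xpu_parts <= 0:
        raise ValueError("counts must be non-negative and ratio parts positive")
    return -(-xpus * cpu_parts // xpu_parts)


if __name__ == "__main__":
    fleet = 1024  # hypothetical accelerator count
    baseline = cpus_needed(fleet, cpu_parts=1, xpu_parts=4)  # assumed 1:4 ratio
    target = cpus_needed(fleet, cpu_parts=1, xpu_parts=1)    # 1:1 ratio
    print(f"CPUs at 1:4: {baseline}")
    print(f"CPUs at 1:1: {target}")
    print(f"Increase in CPU count: {target / baseline:.0f}x")
```

A planner could extend the same calculation with per-CPU cost and power figures to estimate the effect on total cost of ownership, though those inputs are outside what this article provides.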

The risk for Intel is that heterogeneous architectures also create openings for Arm-based server processors, custom RISC-V designs, and cloud-provider-designed silicon that could displace x86 CPUs in specific workload tiers. The partnership’s emphasis on co-development and multigenerational alignment suggests both companies recognize that architectural lock-in, rather than component superiority, will determine long-term infrastructure partnerships. The competition for AI infrastructure is shifting from individual chip performance to system-level integration, and the Intel-Google collaboration is a bet that tightly coupled CPU-IPU platforms can win that system-level contest.

What to Watch:

- Whether Google’s Xeon deployment expands to new instance families beyond C4 and N4 as inference workloads scale through 2026 and 2027.

- The pace and scope of custom IPU co-development milestones, including whether Intel’s foundry capabilities can deliver on Google’s custom silicon timelines.

- Whether other hyperscalers follow Google’s lead in publicly committing to multigenerational CPU roadmaps or continue to diversify toward custom silicon alternatives.

- The degree to which enterprise architects adopt CPU-to-GPU ratio planning as a formal variable in AI infrastructure procurement decisions.

- How energy efficiency and total cost of ownership benchmarks for Xeon-IPU configurations compare with those of competing architectures in production environments.

See the full press release on Intel’s AI infrastructure collaboration with Google on the Intel website.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually, based on data and other information that might have been provided for validation, and are not those of Futurum as a whole.

Source: Intel

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.