Analyst(s): Fernando Montenegro, Mitch Ashley

Publication Date: April 3, 2026

What is Covered in This Article:

- The Scale of RSAC 2026: A look at the 35th annual conference, BSidesSF, the massive vendor landscape, and emerging community trends.

- The AI “Tragedy of the Commons”: How ubiquitous, confusing AI messaging is making it harder for sophisticated buyers to assess real value.

- The “Human in the Loop” Illusion: Why relying on human intervention is an unscalable band-aid that ignores the critical debate around AI reasoning capabilities.

- The Unsecured Protocol Layer: The looming, unaddressed risks surrounding Model Context Protocol (MCP) and agent-to-agent (A2A) communications.

- Securing the Agentic Landscape: A practical breakdown of the different agent types, their environments, and their unique security threats.

- AppSec Blind Spots: The lack of purpose-built vulnerability detection and provenance tracking for AI-generated code.

- What to Watch: Key indicators for the future of protocol-layer security, AppSec tooling, and behavioral evidence standards.

The Event — Major Themes & Vendor Moves: Now in its 35th year, the RSAC Conference remains the most important cybersecurity event of the year, bringing together professionals from across the industry. This year, RSAC attendance remained strong, bringing over 43,500 security professionals to the Moscone Center. Following its 2022 acquisition by a private equity consortium led by Crosspoint Capital Partners, the conference established itself as an independent, standalone business separate from RSA Security and continued to exert influence on the market, with a year-round content program and marketplace listings complementing the main conference. Earlier this year, RSAC announced that Jen Easterly, a well-known industry leader, had taken the helm as RSAC CEO.

Among the keynotes and hundreds of sessions, the RSAC Innovation Sandbox competition remains a familiar and appreciated part of the conference. This year, Geordie AI was crowned the 2026 “Most Innovative Startup” winner, securing the title with a purpose-built security and governance platform that aims to give enterprises real-time visibility into their AI agent footprints and continuously monitors agentic behavior.

The RSAC Conference is large enough, and draws enough industry participants, that numerous other events cluster around the main conference and benefit from the “network effect.” The largest of these is the San Francisco edition of the popular BSides conference series. BSidesSF kicked off the week’s technical discussions the preceding weekend (March 21-22) at the nearby Metreon. Operating as a 100% volunteer-run, non-profit organization, BSidesSF offered a dynamic, community-driven environment focused on technical depth, education, and peer-reviewed research rather than vendor pitches. Embracing the fun “BSidesSF: The Musical!” theme for 2026, the event drew roughly 2,000 to 3,000 engineers and practitioners seeking honest, “in the trenches” conversations. Setting the tone for the week, both keynotes at BSidesSF featured conversations around AI.

Beyond these two major events, the schedule was rounded out by hundreds of off-site events, happy hours, and summits catering to investors, founders, practitioners, and marketers.

The RSAC Conference expo hall is the largest in the industry, and this year featured more than 600 exhibitors, ranging from the smallest startups in the Early Stage Expo area to numerous country pavilions to major vendors such as Microsoft, Google, Cisco, and many others. If we consider the presence of representatives from numerous smaller vendors attending the main conference and surrounding events, the number of vendors present around the conference is very likely to have exceeded 1,000.

RSAC 2026: The AI ‘Tragedy of the Commons’ and the Future of Agentic Security

Analyst — Take On the Conference Itself: The conference remains the most important event of the year, continuing to drive the community’s evolution by bringing together stakeholders from multiple backgrounds well beyond buyers and sellers. Given that the conference is in San Francisco, many venture investors are expected to participate, for example. However, it was both notable and regrettable that this year, many US government agencies chose to withdraw from the conference due to external factors.

Meanwhile, the vendor landscape remains as loud as ever. With over 600 sponsors on the floor, it is natural for vendors to compete for attention. We observed the largest expo floor to date, filled with the usual antics. Last year we saw live animals; this year vendors brought large inflatables, a wrestling ring, mechanical horses, and more. While these flashy marketing stunts are par for the course, we also continue to see the troubling trend of glorifying threat actors. As the cybersecurity industry matures, we hope this particular tactic will eventually fade.

Worrying Confusion About AI and the “Tragedy of the Commons”

AI was omnipresent on the show floor, with roughly 30% to 40% of booths featuring prominent AI-related messaging. However, this ubiquity highlighted a growing problem, akin to the “Tragedy of the Commons” in economics, in which the collective suffers from the “rational” decisions of individual [economic] agents.

At RSAC, the rush by exhibitors to plaster “AI” and “agents” onto every surface has made gathering precise, actionable information incredibly difficult. Messaging frequently blurred the critical distinctions between “security for AI,” “AI for security,” and “security from AI” use cases. We understand that tying AI to a product feels necessary to stay current, but as buyers become more sophisticated, vendors need to step up and clarify their actual value propositions.

The “Human in the Loop” Illusion

The industry also continues to treat the “human in the loop” (HITL) concept as a magic pill, or perhaps a shibboleth meant to convey a deeper understanding of AI governance. Across sessions and the show floor, the “human in the loop” framing functioned more as reassurance than as a design specification. Vendors invoked it to signal safety without specifying what humans are actually reviewing, at what point in execution, and at what volume. At the scale at which AI and agentic systems operate, that ambiguity has real consequences: teams cannot review every AI/agent decision in real time, and most deployments lack the architectural capability to make agent behavior legible enough to govern it systematically.

The practical gap is that enterprises are deploying agents into consequential workflows without answering a foundational question: how do you know what an AI/agent actually did, and why? Audit trails and approval gates are necessary, but they address discrete actions rather than the full decision path. Until vendors define what behavioral evidence they generate and what governance mechanisms consume it, “human in the loop” remains a positioning statement rather than a scalable operational control.
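To make the distinction between discrete approvals and the full decision path concrete, here is a minimal sketch of what a behavioral evidence record could look like. It is an illustration under stated assumptions, not any vendor's format: the `Step` and `DecisionTrace` classes, their field names, and the sample actions are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    """One step in an agent's decision path (all field names are hypothetical)."""
    action: str                        # what the agent did
    rationale: str                     # why the agent says it did it
    approved_by: Optional[str] = None  # set only when a human approved the step


@dataclass
class DecisionTrace:
    """Ordered record of every step an agent took for one task."""
    task_id: str
    steps: List[Step] = field(default_factory=list)

    def record(self, action, rationale, approved_by=None):
        self.steps.append(Step(action, rationale, approved_by))

    def unreviewed(self, gated_actions):
        """Return gated steps that ran without a recorded human approval."""
        return [s for s in self.steps
                if s.action in gated_actions and s.approved_by is None]


# Hypothetical task: three steps, only one of which a human approved.
trace = DecisionTrace(task_id="task-001")
trace.record("read_ticket", "needed the incident details")
trace.record("disable_account", "ticket reported credential theft")
trace.record("send_summary", "close out the ticket", approved_by="analyst-7")

gaps = trace.unreviewed({"disable_account", "send_summary"})
print([s.action for s in gaps])
```

In this sketch the trace keeps the ungated `read_ticket` step as well, so a reviewer can see what led up to a gated action rather than only the approval itself. A record like this is the kind of artifact a governance mechanism could consume.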

Sidestepping the Reasoning Debate

Closely tied to the HITL dilemma is a widespread reluctance to debate the mechanics of reasoning, a critical factor when deploying agents. The cybersecurity industry seems far too comfortable accepting LLM-based foundation models as the default reasoning engines for agent workflows. This poses a potentially massive challenge for our space. Cybersecurity failures almost always happen on the margins, echoing a line often attributed to Mark Twain: “It ain’t what you don’t know that gets you into trouble. It’s what you know for sure that just ain’t so.”

While there is significant ongoing research within the broader AI industry exploring advanced concepts such as neurosymbolic AI and world models, nuanced discussions of these topics were largely absent at the event. Unfortunately, most conversations around reasoning at RSAC simply reverted to “human in the loop,” “audit trails,” or similar after-the-fact band-aids. For security practitioners looking to deploy autonomous tools safely, paying attention to this gap in reasoning capabilities is essential.

The Protocol Layer Nobody Secured

Agent-to-resource communication protocols expanded rapidly through 2025 as engineering teams connected agents to internal data sources, APIs, and tools. That adoption outran security and compliance readiness, and, to us, RSAC largely missed the conversation.

The Model Context Protocol (MCP) creates a concrete attack surface: prompt injection through tool outputs, server-side request forgery at the agent-to-resource boundary, and authorization gaps where agents inherit credentials without scoped delegation. These are not theoretical concerns. They follow the same adoption pattern as containers, APIs, and open-source dependencies: organic uptake at engineering speed, with the security reckoning arriving only once adoption is irreversible.
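Two of the risks named above, request forgery at the agent-to-resource boundary and unscoped credential inheritance, can be illustrated with a short sketch. This is not part of MCP or any SDK: the allowlist, the function names, and the scope strings are assumptions chosen for illustration.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical allowlist of hosts a tool call may reach.
ALLOWED_HOSTS = {"api.example.com"}


def is_safe_tool_url(url: str) -> bool:
    """Reject URLs an agent should not fetch on behalf of a tool call."""
    parsed = urlparse(url)
    if parsed.scheme != "https" or not parsed.hostname:
        return False
    host = parsed.hostname
    try:
        # Literal IP addresses are refused outright in this sketch, which
        # also covers loopback, private, and link-local metadata addresses.
        ipaddress.ip_address(host)
        return False
    except ValueError:
        pass  # not an IP literal, fall through to the allowlist check
    return host in ALLOWED_HOSTS


def is_permitted(granted_scopes: set, required_scope: str) -> bool:
    """Scoped delegation: allow an action only if its scope was granted."""
    return required_scope in granted_scopes


print(is_safe_tool_url("https://api.example.com/v1/items"))
print(is_safe_tool_url("https://169.254.169.254/latest/meta-data"))
print(is_permitted({"tickets:read"}, "tickets:write"))
```

A hostname allowlist alone does not address every variant of request forgery (DNS rebinding, for instance, needs a check on the resolved address), so this is a starting point rather than a complete control.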

The near-absence of MCP and A2A protocol security as a distinct conversation at RSAC means the industry is running the same playbook again. Protocol-layer risk will become a procurement question. The vendors that move ahead of that conversation will be better positioned than those waiting for a breach to make it urgent. The Agentic AI Foundation, now operating under the Linux Foundation, represents the security community’s clearest opportunity to shape protocol-level governance standards before enterprise deployments force the issue.

Significant Confusion About Agents

Alongside the broader AI narrative, agents and agentic workflows were, as expected, top of mind on the show floor and in conversations with practitioners, founders, and investors alike. These agentic workflows offer tantalizing productivity gains across multiple areas of an enterprise, including cybersecurity operations.

However, our discussions across this spectrum of stakeholders revealed a glaring issue: many were eagerly discussing “agents” without ever actually defining what they meant by the term. To borrow a quote often attributed to George Bernard Shaw: “The single biggest problem in communication is the illusion that it has taken place.”

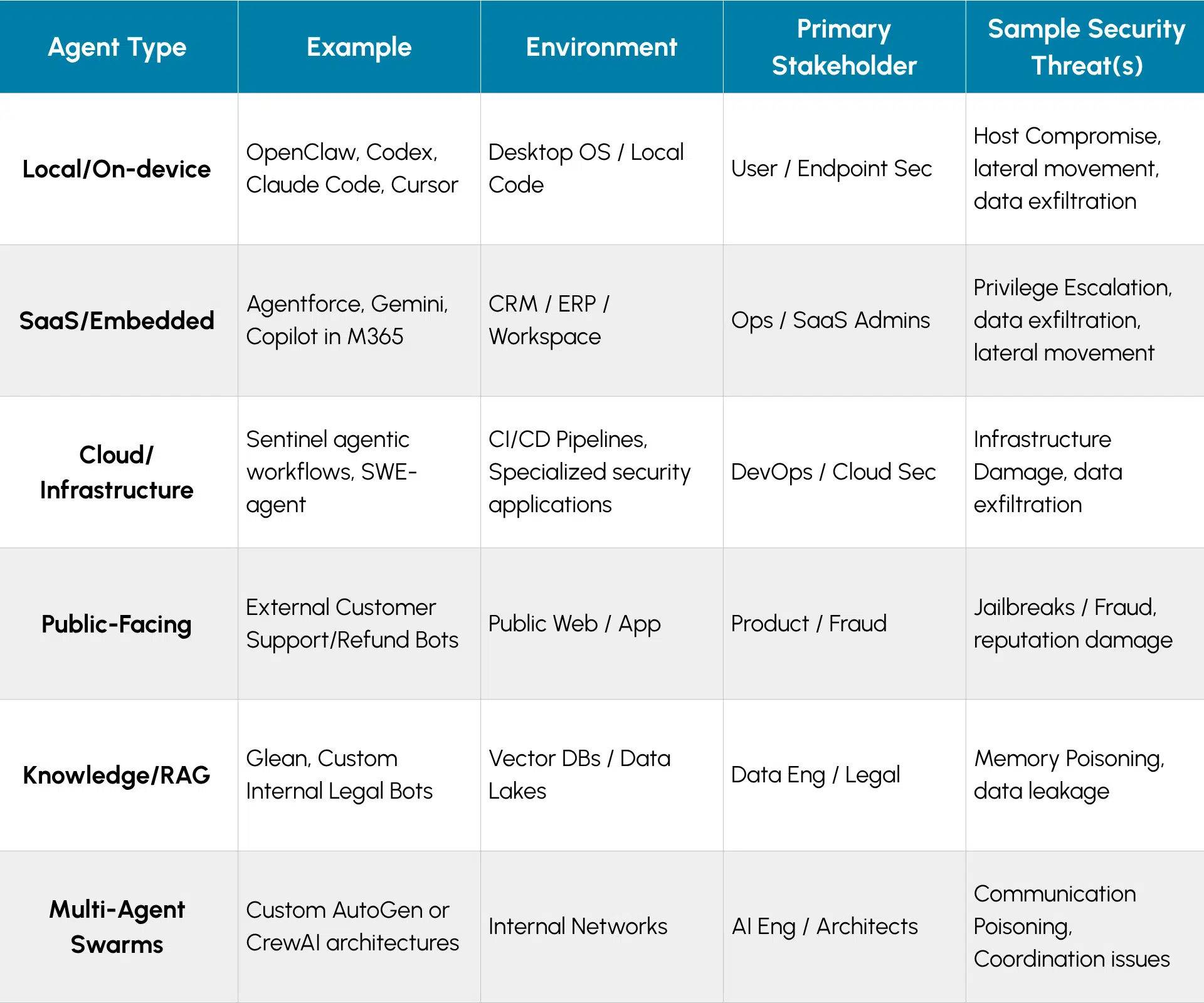

We see a significant risk in the disconnect in how the industry approaches securing different types of agents. As the illustrative framework above demonstrates, the agentic landscape is incredibly varied. Each workflow type operates in a distinct environment, involves different primary stakeholders, and introduces its own, non-exhaustive set of security challenges.

Coming out of the conference, our core observation is that the industry must do better at incorporating this nuance into its handling of agent security. Every agent type outlined above presents unique security considerations, and we must arm practitioners with the precise knowledge, guidance, and tooling required to secure each variant, all while supporting the broader business objectives driving these deployments. Given the intense enterprise pressure to adopt AI rapidly, security teams simply do not have the luxury of trial and error when it comes to agentic security.

Moving forward, we expect significant guidance and native security capabilities to emerge directly within the agentic AI platforms themselves. This will be driven by major hyperscalers such as Google, Microsoft, and AWS, as well as enterprise SaaS providers such as Salesforce, ServiceNow, IBM, Oracle, and SAP.

To bridge the remaining gaps, we expect practitioners to lean heavily on their strategic security partners, such as Palo Alto Networks, CrowdStrike, Cisco, Fortinet, and Trend Micro, among many others, as well as on the growing ecosystem of specialized startups emerging to tackle this exact challenge. When dealing with startups, aligning their capabilities with specific agentic use cases will be critical, as many startups tend to focus on narrower use cases.

Securing AI Requires Starting at the Development Lifecycle

The dominant security frame at RSAC treated agents as runtime objects: things that execute, access systems, and create blast radius. That frame misses half the problem. The AI models, open source components, and dependencies used to build agents carry their own risk profile, and most of that risk enters the environment long before any agent executes a single action.

Several vendors at RSAC were repositioning around development-lifecycle security, and the moves clustered into two patterns. The first is embedding security intelligence upstream into coding workflows, including IDE, MCP, and CI/CD integration, extending scrutiny to AI models and OSS components as supply chain objects evaluated against provenance, integrity, and behavioral risk signals. The second is bringing observability-native transparency throughout the development pipeline rather than concentrating it at runtime: repositioning observability as an active control plane with AI-aware telemetry, and tying data pipeline health and lineage into the same fabric used for application and security monitoring.

The vendors that made these architectural moves before RSAC arrived with context that distinguished them from those still attaching AI positioning to existing product lines. The question the conference did not resolve is whether this recognition produces genuinely integrated architectures or another layer of point tools that enterprises will eventually have to rationalize.

AppSec for AI-Generated Code Has No Answer Yet

One area where agent use is more advanced is software engineering. AI-generated code is shipping at production scale. Vulnerability detection for agent-authored code, software supply chain provenance for AI-generated artifacts, and SBOM coverage for agent modifications all require tooling and process adaptations that most enterprise AppSec programs have not made.

The specific failure modes of AI-generated code, including hallucinated dependencies and unverified library calls, do not cleanly map onto the threat models that traditional static analysis tools were built to address. Vendors at RSAC largely folded this under generic AppSec messaging. That framing will not hold as agent code volumes scale and regulators begin asking pointed questions about software provenance.
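As one illustration of the hallucinated-dependency failure mode, the sketch below flags declared packages that are absent from an approved set before anything is installed. It is a simplified example under stated assumptions: the `APPROVED` allowlist and the package name `fastjson-utils` are hypothetical, and a real program would check an internal index or registry rather than a hard-coded set.

```python
import re

# Hypothetical internal allowlist of vetted package names.
APPROVED = {"requests", "numpy", "cryptography"}


def normalize(name: str) -> str:
    """Normalize a package name following the PEP 503 rule."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unknown_dependencies(requirement_lines, approved=APPROVED):
    """Return declared package names that are not in the approved set."""
    approved_norm = {normalize(p) for p in approved}
    unknown = []
    for line in requirement_lines:
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Keep only the name, before any version specifier, extra, or marker.
        name = re.split(r"[\s<>=!~;\[]", line, maxsplit=1)[0]
        if normalize(name) not in approved_norm:
            unknown.append(name)
    return unknown


declared = [
    "requests>=2.31",
    "Numpy",
    "fastjson-utils==0.1  # hypothetical name suggested by a coding agent",
]
print(unknown_dependencies(declared))
```

A check of this kind catches only names that were never vetted. It says nothing about whether an approved library is called correctly, which is the separate "unverified library calls" problem.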

The security community’s contributions to AI open standards work are not yet commensurate with the risks those standards pose. The Agentic AI Foundation under the Linux Foundation is the right venue to change that. Practitioners who understand protocol-level attack surfaces, credential delegation, and authorization models are directly relevant to how MCP and A2A governance evolve. The alternative is security requirements defined primarily by the teams building the protocols, with hardening applied only after adoption has made the architecture difficult to change.

What to Watch:

- Watch whether MCP and A2A protocol security surfaces as a distinct vendor category, or gets absorbed into SIEM, XDR, and AppSec platforms without dedicated treatment. The outcome reveals whether the industry treats protocol-layer agent risk as structurally distinct from application-layer risk, or repeats the pattern of bolting security onto already-deployed infrastructure.

- Watch whether AppSec vendors develop purpose-built solutions for AI-generated code, including provenance tracking and hallucination-detection for dependencies. Applying traditional static analysis to fundamentally different code-generation patterns will create growing blind spots as agent code volumes scale.

- Watch for the emergence of “Shadow Agent” discovery as a critical capability. As business units rapidly deploy autonomous workflows within their SaaS applications (like CRM or ERP environments), enterprise security teams will urgently need tools to discover, inventory, and assess ungoverned agentic activity before it leads to data exposure.

- Watch whether “human in the loop” evolves from a positioning claim into a specified design requirement. Enterprises that begin demanding concrete behavioral evidence standards from vendors will force the conversation from reassurance into architecture.

- Watch how compliance frameworks and regulators adapt to autonomous decision-making. As the volume of AI-driven actions scales, auditors will inevitably shift their focus from static security controls to demanding continuous, verifiable proof of agent governance and boundary enforcement.
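The “Shadow Agent” discovery item above reduces, at its simplest, to comparing what is actually running against what has been registered. The sketch below shows that comparison. The agent records, their fields, and the identifiers are hypothetical, and how the discovered list is gathered from each SaaS platform is outside the scope of the example.

```python
def find_shadow_agents(discovered, registered_ids):
    """Return discovered agents that are missing from the governed inventory."""
    return [agent for agent in discovered if agent["id"] not in registered_ids]


# Hypothetical discovery output from CRM and ERP environments.
discovered = [
    {"id": "crm-lead-router", "platform": "crm", "owner": "sales-ops"},
    {"id": "erp-invoice-bot", "platform": "erp", "owner": None},
]
registered_ids = {"crm-lead-router"}  # agents the security team already governs

shadow = find_shadow_agents(discovered, registered_ids)
print([agent["id"] for agent in shadow])
```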

The RSAC Conference’s closing press release is available here.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.
