Analyst(s): Brendan Burke

Publication Date: March 30, 2026

Samsung and AMD have expanded their partnership to align on next-generation AI memory and computing technologies, centered on HBM4 and DDR5 solutions. The collaboration targets integrated AI systems and reflects growing pressure on memory bandwidth and system-level performance.

What is Covered in This Article:

- Samsung and AMD signed an MoU to expand collaboration on AI memory and computing technologies, including HBM4 and DDR5.

- Samsung is expected to supply HBM4 for AMD’s Instinct MI455X GPUs and DDR5 for 6th Gen EPYC CPUs, codenamed “Venice.”

- The partnership supports integrated AI systems that combine GPUs, CPUs, and rack-scale architectures, such as the Helios platform.

- The agreement includes discussions around potential foundry services and deeper semiconductor manufacturing collaboration.

- The development reflects the increasing importance of memory bandwidth and efficiency in AI infrastructure performance.

The News: Samsung Electronics and AMD have signed a memorandum of understanding to expand their collaboration on next-generation AI memory and computing technologies. The agreement focuses on aligning Samsung’s HBM4 memory supply with AMD’s upcoming Instinct MI455X GPUs, alongside DDR5 memory solutions for 6th Gen EPYC processors, codenamed “Venice,” supporting systems built on the AMD Helios platform.

The collaboration targets integrated AI infrastructure combining GPUs, CPUs, and rack-scale architectures, with both companies emphasizing the importance of memory bandwidth and power efficiency for system-level performance. The agreement also includes discussions on potential foundry services, with Samsung positioning itself as a key supplier of HBM4 while exploring broader engagement in AMD’s semiconductor ecosystem.

Can AMD Strengthen Both Logic and Memory Supply Chains With Samsung?

Analyst Take: Samsung and AMD’s expanded collaboration on AI memory infrastructure signals a deeper alignment across the computing stack, centered on HBM4 supply, DDR5 development, and integration into AMD’s Instinct, EPYC, and Helios platforms. The agreement builds on nearly two decades of collaboration and positions Samsung as a primary supplier for next-generation AI accelerators. The focus on memory bandwidth, efficiency, and system-level integration reflects the growing constraints on scaling AI workloads. The inclusion of potential foundry collaboration further extends the scope beyond memory into logic fabrication. This development deepens AMD’s strategic advantage in on-package memory integration, as laid out in Futurum’s Signal Report on AI Accelerators.

Memory Bandwidth as a System-Level Constraint

Both companies explicitly highlight memory bandwidth and power efficiency as critical constraints on AI system performance, particularly for training and inference workloads. Samsung’s HBM4, built on a 6th-generation 10nm-class DRAM process with a 4nm logic base die, delivers per-pin speeds of up to 13 Gbps and up to 3.3 TB/s of bandwidth per stack, positioning it as a key enabler for next-generation systems. This focus indicates that performance gains increasingly depend on memory advancements rather than on compute alone. The integration of HBM4 into AMD’s MI455X GPU is positioned to address these system-level bottlenecks. As a result, memory architecture is emerging as a defining factor in AI infrastructure scalability.
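The two HBM4 figures are linked by simple arithmetic: peak per-stack bandwidth is the per-pin data rate multiplied by the interface width, divided by eight to convert bits to bytes. The sketch below assumes a 2048-bit interface per stack, the width defined for HBM4 by JEDEC; that width is not stated in Samsung’s announcement, and the helper name `peak_stack_bandwidth_tbps` is hypothetical. Under that assumption, 13 Gbps per pin works out to roughly 3.3 TB/s.

```python
# Hypothetical helper illustrating how per-pin speed maps to per-stack bandwidth.
# Assumption: a 2048-bit interface per HBM4 stack (JEDEC HBM4 width); not from the source.

def peak_stack_bandwidth_tbps(pin_speed_gbps: float, interface_bits: int = 2048) -> float:
    """Return theoretical peak bandwidth of one stack in TB/s (decimal units)."""
    bits_per_second = pin_speed_gbps * 1e9 * interface_bits  # all pins transferring at once
    bytes_per_second = bits_per_second / 8                    # 8 bits per byte
    return bytes_per_second / 1e12                            # bytes/s -> TB/s


if __name__ == "__main__":
    bandwidth = peak_stack_bandwidth_tbps(13.0)
    print(f"{bandwidth:.3f} TB/s")  # prints "3.328 TB/s"
```

This is a theoretical peak; sustained bandwidth in a real system will be lower once refresh, protocol overhead, and access patterns are accounted for.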

Increasing Integration Across the AI Stack

The agreement aligns memory, compute, and system architecture through combined deployment of Instinct GPUs, EPYC CPUs, and Helios rack-scale platforms. This reflects a shift toward tightly integrated AI systems rather than discrete component optimization. Samsung’s role spans memory supply, advanced packaging, and potential foundry services, indicating a broader contribution to system design. AMD’s emphasis on integration “from silicon to system to rack” underscores the need for coordination across all layers of infrastructure. This suggests that future AI performance improvements will increasingly depend on cross-layer optimization rather than isolated hardware upgrades.

Samsung’s Positioning in the AI Supply Chain

Samsung is positioning itself as a key HBM4 supplier for AMD’s next-generation AI GPUs, building on its existing role supplying HBM3E for the MI350X and MI355X accelerators. The company holds approximately 22% of the global HBM market, compared with 57% for SK Hynix, leaving Samsung in the position of challenger to the market leader in advanced memory. By aligning closely with AMD’s upcoming platforms, Samsung strengthens its presence in a segment experiencing tight supply and rising demand. The potential expansion into foundry services introduces an additional dimension to its role in the semiconductor value chain. This indicates a broader effort to expand beyond memory supply into more integrated participation in AI infrastructure development.

Foundry Collaboration and Supply Chain Implications

The agreement includes discussions around Samsung providing foundry services for next-generation AMD products, signaling a possible shift in manufacturing relationships. Samsung may seek to leverage its HBM relationship to gain a role in logic manufacturing, potentially altering supply chain dynamics. The timing of the agreement, alongside industry focus on securing advanced memory supply, reflects intensifying competition across semiconductor partnerships. If realized, foundry collaboration would extend Samsung’s influence from memory into broader accelerator production, reshaping its role in the AI hardware ecosystem.

What to Watch:

- The extent to which Samsung transitions from an HBM supplier to a broader accelerator partner through foundry engagement.

- How memory bandwidth and power efficiency continue to shape AI system design priorities.

- Competitive positioning in the HBM market, particularly relative to SK Hynix’s leading share.

- Execution of integrated AI systems combining GPUs, CPUs, and rack-scale architectures such as Helios.

- Impact of long-term supply agreements as AI-driven demand tightens availability of advanced memory.

See the complete press release on Samsung’s website about the collaboration between Samsung and AMD on next-generation AI memory solutions.

Declaration of generative AI and AI-assisted technologies in the writing process: This content has been generated with the support of artificial intelligence technologies. Due to the fast pace of content creation and the continuous evolution of data and information, The Futurum Group and its analysts strive to ensure the accuracy and factual integrity of the information presented. However, the opinions and interpretations expressed in this content reflect those of the individual author/analyst. The Futurum Group makes no guarantees regarding the completeness, accuracy, or reliability of any information contained herein. Readers are encouraged to verify facts independently and consult relevant sources for further clarification.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.


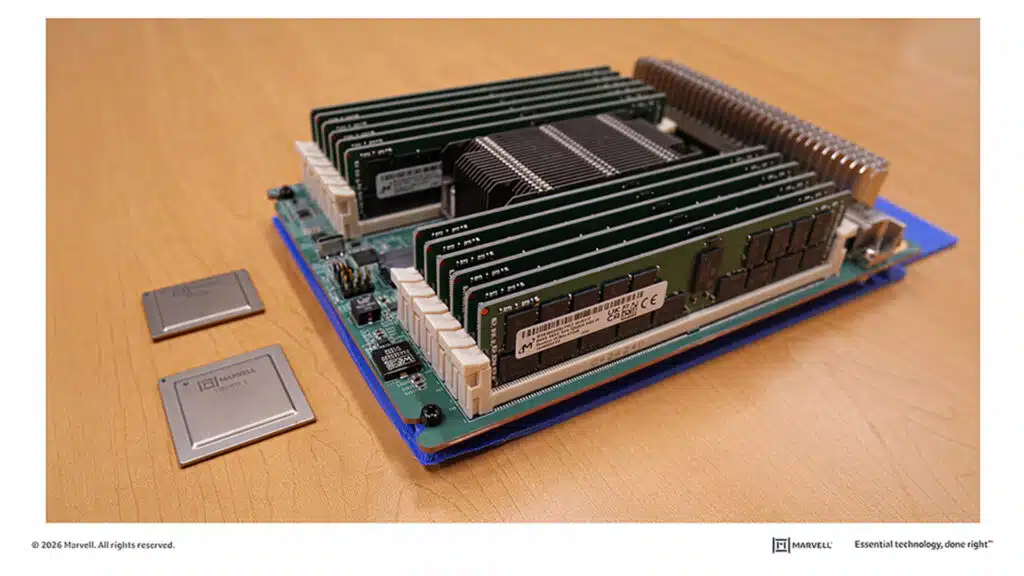

Image Credit: Samsung

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He holds a Bachelor of Arts degree from Amherst College.