Analyst(s): Brendan Burke

Publication Date: March 26, 2026

Arm announced the AGI CPU, its first proprietary production silicon, built on the Neoverse V3 platform and designed to power agentic AI orchestration at rack scale. The move transforms Arm from a pure intellectual property licensor into a direct silicon competitor in the data center CPU market, fundamentally altering the company’s revenue model and the competitive dynamics of AI infrastructure.

What is Covered in This Article:

- Arm’s launch of the AGI CPU and its co-development with Meta

- The business model shift from IP royalties to direct silicon revenue

- How agentic workloads are restructuring CPU demand in AI data centers

- Synopsys, Micron, and Marvell ecosystem alignment with AGI CPU specifications

- Competitive positioning against NVIDIA Vera, AMD EPYC Venice, and Intel Clearwater Forest

The News: Arm announced the AGI CPU on March 24, 2026, marking the first time in the company’s more than 35-year history that it has delivered its own production silicon. Built on the Arm Neoverse V3 platform, the chip packs 136 cores into a 300-watt envelope and is designed for agentic AI orchestration, accelerator management, and dense rack-scale deployment. Arm’s reference configuration supports up to 8,160 cores in a standard air-cooled 36-kilowatt rack, with a Supermicro liquid-cooled design housing over 45,000 cores across 336 chips in a 200-kilowatt enclosure.
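The rack figures above can be cross-checked with simple arithmetic. The short Python sketch below uses only the numbers quoted in the announcement (136 cores and 300 watts per chip, 8,160 cores per 36-kilowatt rack, 336 chips per 200-kilowatt enclosure). It assumes every chip draws its full 300-watt envelope. The remaining rack budget would presumably go to memory, storage, networking, and power conversion, but that breakdown is an assumption, not an Arm-published figure.

```python
# Back-of-envelope check of the AGI CPU rack figures quoted in the article.
# Assumption: each chip runs at its full 300 W envelope; non-CPU rack power
# (memory, storage, networking, power conversion) is not modeled here.
CORES_PER_CHIP = 136   # AGI CPU core count, per the announcement
CHIP_POWER_W = 300     # per-chip power envelope, per the announcement


def cpu_power_kw(chips: int) -> float:
    """Aggregate CPU power envelope for a given chip count, in kW."""
    return chips * CHIP_POWER_W / 1000


# Air-cooled reference rack: 8,160 cores in a 36 kW rack.
air_chips = 8_160 // CORES_PER_CHIP     # 60 chips
air_cpu_kw = cpu_power_kw(air_chips)    # 18.0 kW of CPU power
air_share = air_cpu_kw / 36             # half of the rack budget

# Supermicro liquid-cooled design: 336 chips in a 200 kW enclosure.
liquid_cores = 336 * CORES_PER_CHIP     # 45,696 cores ("over 45,000")
liquid_cpu_kw = cpu_power_kw(336)       # 100.8 kW of CPU power
liquid_share = liquid_cpu_kw / 200      # roughly half of the enclosure budget

print(air_chips, air_cpu_kw, f"{air_share:.0%}")
print(liquid_cores, liquid_cpu_kw, f"{liquid_share:.0%}")
```

In both configurations, the CPUs alone account for roughly half of the rack power budget. This is consistent with a design that favors core density over headroom for attached accelerators.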

Meta is the lead co-development partner and customer, working alongside Arm to optimize gigawatt-scale infrastructure for its family of applications and custom MTIA accelerators. Additional launch partners include Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom, with commercial systems available for order from ASRock Rack, Lenovo, and Supermicro. More than 50 companies across hyperscale, cloud, silicon, memory, networking, software, and system design are supporting the expansion.

“OpenAI runs AI systems at massive scale,” said Sachin Katti, Head of Industrial Compute at OpenAI. “The Arm AGI CPU will play an important role in our infrastructure as we scale, strengthening the orchestration layer that coordinates large-scale AI workloads and improving efficiency, performance, and bandwidth across the system.”

Arm’s $15 Billion CPU Opportunity Hinges on Agentic Data Center Design

Analyst Take: The Arm AGI CPU redefines Arm’s position in the semiconductor value chain, arriving at a moment when agentic AI workloads are restoring the CPU to strategic prominence in data center architecture. For more than three decades, Arm generated revenue by licensing intellectual property and collecting per-unit royalties, a model that produced pennies per chip even as Arm-based designs proliferated across hyperscale platforms such as AWS Graviton, Google Axion, and Microsoft Azure Cobalt.

By shipping finished silicon, Arm now captures dollars per chip in direct margin on top of the royalties it already collects from every licensee. This dual-revenue model targets a data center CPU market that Futurum projects will reach $76.6 billion by 2029, with annual growth accelerating to 34.9%, outpacing both GPUs and XPUs. In a supply-constrained environment, Arm can benefit from new TSMC capacity coming online and from industry relationships that can help it gain market share. Management's target of $15 billion in revenue by FY 2031 could redefine the company as an AI data center innovator and serve as a platform for successive silicon designs in Physical AI, which builds on the company's historical core markets at the edge.

Agentic Workloads Restore the CPU to the Center of AI Infrastructure

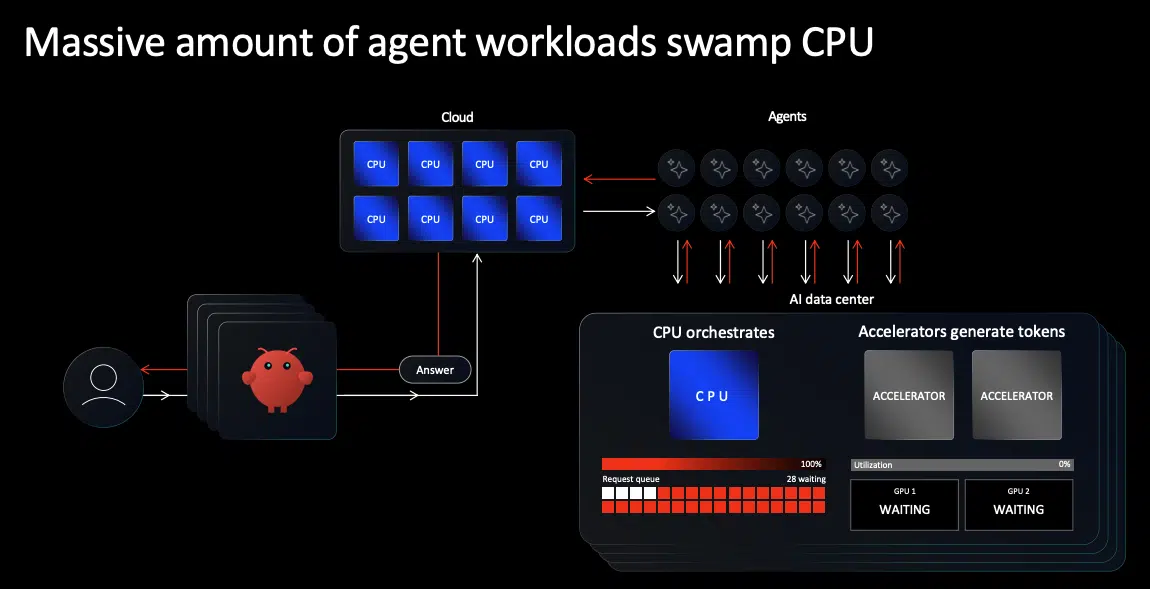

The timing of the AGI CPU reflects a structural shift in how AI systems consume compute. Training-era architectures concentrated investment in GPUs, treating the CPU as a housekeeping component, with one CPU for every two or four accelerators. Agentic AI has inverted that assumption. When software agents orchestrate sub-agents, call external tools, query databases, and wait for human approvals, the GPU sits allocated but idle while the CPU handles the active work. Research from Georgia Tech and Intel has shown that CPU-side tool processing accounts for up to 90.6% of total latency in representative agentic workloads, and Anyscale has demonstrated an 8x reduction in GPU requirements by disaggregating CPU- and GPU-intensive pipeline stages.

In our CPU demand report published last month, Futurum projected that CPU-to-GPU ratios in AI clusters would climb back toward 1:1 as multi-agent workloads scale. Arm expects agentic data centers to increase CPU content by 4x, to 120 million cores per GW. Combined with the estimated 36 kW power draw of an Arm AGI CPU rack, such an increase would bring the ratio of CPU to GPU servers closer to 7:1, allocating a majority of data center power to CPUs rather than GPUs. This inversion may reflect the unique requirements of Meta's recommendation systems, a workload for which Meta has already agreed to invest in next-generation AMD and NVIDIA CPUs.
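The claim that CPUs would take a majority of data center power can be roughly checked against Arm's own figures. The sketch below assumes that all 120 million cores per GW are deployed in Arm's 8,160-core, 36 kW air-cooled reference racks. That simplification is ours, not Arm's, and it ignores denser liquid-cooled configurations and facility overhead.

```python
# Rough check: share of 1 GW consumed by 120 million AGI CPU cores, assuming
# (our simplification) every core sits in Arm's 8,160-core, 36 kW reference rack.
CORES_PER_GW = 120_000_000   # Arm's agentic data center projection
CORES_PER_RACK = 8_160       # air-cooled reference rack
RACK_KW = 36                 # reference rack power draw
GW_IN_KW = 1_000_000

baseline_cores = CORES_PER_GW / 4         # implied pre-agentic level: 30 million
racks = CORES_PER_GW / CORES_PER_RACK     # ~14,706 racks
cpu_power_kw = racks * RACK_KW            # ~529,412 kW
cpu_share = cpu_power_kw / GW_IN_KW       # ~0.53 of a gigawatt

print(f"{racks:,.0f} racks, {cpu_power_kw / 1000:,.0f} MW, {cpu_share:.0%} of 1 GW")
```

Under this assumption, CPU racks would draw slightly more than half of a gigawatt. This matches the article's point that power allocation would tilt toward CPUs, although the exact split depends on rack density and GPU system power.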

This bold vision for agentic data centers relies on three use cases for CPUs, as outlined by Arm Executive Vice President Mohamed Awad: CPUs as head nodes, standalone racks, and AI factory-level orchestrators. Our report also laid out multiple CPU use cases for agentic inference and reinforcement learning sandboxes. Even so, factory-level CPU orchestration would diverge from the GPU-centric model of AI factories to date. Arm's decision to purpose-build the AGI CPU around sustained, massively parallel agentic throughput rather than peak single-thread performance reflects a deliberate architectural bet: that the CPU orchestration layer, not the accelerator, will be the binding constraint on next-generation AI infrastructure.

The Dual Revenue Model Creates an Asymmetric Competitive Position

Arm occupies a position in the data center CPU market that no other company can replicate. AWS Graviton, Google Axion, Microsoft Azure Cobalt, and NVIDIA Vera are all built on Arm architecture, generating royalty income for Arm regardless of which platform wins a given workload. The AGI CPU adds a direct silicon revenue stream on top of that royalty base, meaning Arm is compensated whether it wins the socket directly or its licensees do. This dual-monetization structure insulates Arm from the zero-sum dynamics that define competition among Intel, AMD, and NVIDIA in the server market.

It also introduces a tension that Arm must manage carefully: licensees who have invested billions in custom Arm-based designs may view a first-party chip as competitive encroachment rather than ecosystem expansion. Customers may pressure Arm to avoid selling to cloud competitors, limiting the serviceable market to neocloud and AI-lab deployments such as OpenAI's Stargate. Arm frames the AGI CPU as targeting the standalone orchestration layer, distinct from the tightly coupled GPU-CPU pairings served by NVIDIA and the cloud applications served by hyperscalers' in-house chips, a positioning designed to minimize that friction. Whether the serviceable market proves large enough for co-expansion rather than share displacement will depend on how specialized agentic data centers become and on enterprise demand for AI-targeted CPU servers.

EDA Partnerships and the Component Ecosystem Validate Production Readiness

Arm's transition from IP licensor to silicon vendor demanded a supply chain and electronic design automation (EDA) ecosystem willing to co-invest in a first-generation product with no production track record. Synopsys played a central role in that enablement, announcing that Arm used its full-stack design portfolio to develop and validate the AGI CPU on TSMC's advanced 3nm node. This collaboration extended to silicon-proven interface IP and hardware-assisted verification (HAV), enabling pre-silicon software confidence and system-scale validation. Cadence also supported the launch, building on a long history of Arm SoC verification. The result is not only a chip design but also a rack-scale system design that leverages Arm's energy-efficiency advantage. The collaboration shows the depth of resources EDA leaders can bring to custom silicon teams that lack a track record of shipping production chips.

Beyond design tooling, the AGI CPU's 96 lanes of PCIe Gen6 and native CXL 3.0 support align directly with the product roadmaps of key launch partners such as Micron and Marvell. Micron's 60TB PCIe Gen6 solid-state drive (SSD) is built for the bandwidth and latency profile that the AGI CPU demands. Marvell's recently announced Structera S 30260, a 260-lane CXL switch with CXL 3.0 support, can create a coherent memory and interconnect fabric for agentic workloads. The breadth of this ecosystem, spanning EDA, memory, networking, and system integration, suggests that the AGI CPU is not a reference design or a technology demonstration but a production-grade platform with validated supply chain commitments.

Competition Will Compress Margins and Accelerate Innovation

The server CPU market has not been this broadly contested in two decades, since the era when Sun Microsystems still held significant market share. NVIDIA launched the Vera CPU at GTC, featuring 88 custom Olympus cores for agentic orchestration. AMD's EPYC Venice brings 256 Zen 6 cores on TSMC 2nm with a claimed 70% generational performance leap. Intel's Clearwater Forest packs 288 E-cores on its 18A process, with Diamond Rapids P-core chips also scheduled for 2026. Qualcomm is re-entering the server market with a rack designed to plug into NVIDIA's NVLink Fusion architecture. Among hyperscalers, AWS Graviton has proven successful as the first hyperscaler-designed Arm data center CPU, serving nearly 100,000 cloud customers and driving over half of AWS CPU demand.

Arm's competitive differentiation rests on three claims: roughly double the core density of a standard x86 1U server, per-core bandwidth tuned for thousands of always-on AI agents, and full software compatibility with the existing Neoverse ecosystem that already runs across AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure. The OCP-standard rack form factor and existing Neoverse software stack lower adoption barriers for operators who have already validated Arm workloads in production. However, NVIDIA's Vera benefits from tight integration with NVIDIA GPUs via NVLink, while AMD's EPYC line, from Turin to the upcoming Venice, is already entrenched at Meta, Microsoft, and other hyperscalers. The AGI CPU must prove that standalone CPU-dense orchestration racks are a large enough category to sustain a premium-priced, first-party Arm product alongside cheaper Arm licensee alternatives and deeply entrenched x86 incumbents.

Performance Claims Require Production Validation Before Reshaping Procurement

Arm claims more than 2x performance per rack compared with the latest x86 systems, a figure it attributes to higher effective memory bandwidth, superior per-thread performance from Neoverse V3 cores, and denser blade configurations. A third-party analysis accompanying the launch estimates up to $10 billion in capital expenditure savings per gigawatt of AI data center capacity. If validated, that figure would elevate the procurement conversation from a technical evaluation to a generational system upgrade.

However, these claims carry an important caveat: they are based on Arm's internal estimates comparing fully populated rack configurations with comparable x86 setups using industry-standard workloads. The broader x86 software ecosystem, with decades of enterprise application optimization, middleware tuning, and compatibility with platforms such as VMware and Red Hat, imposes switching costs on existing workloads. The proof will emerge over the next 12 to 18 months as Meta, OpenAI, and the broader launch partner base move from announced commitments to measured production telemetry. Until that data is public, the 2x claim remains a thesis rather than a procurement mandate.

What to Watch:

- Wafer supply: The AGI CPU’s reliance on TSMC’s 3nm capacity introduces fabrication risk at a moment when every major AI chip vendor is competing for the same advanced node allocation, and any supply constraint could delay Arm’s volume ramp relative to AMD Venice and NVIDIA Vera. Watch for volume production in H2 2027.

- Licensee reaction: If AWS, Google, or Microsoft publicly endorses the AGI CPU alongside its own Graviton, Axion, or Cobalt roadmap, it validates Arm's co-expansion thesis; silence or competitive counter-positioning would suggest the channel conflict risk is materializing.

- Performance testing: The AGI CPU's 2x rack-level performance claim rests on Arm internal estimates. Independent results will show whether Arm-native deployments can overcome the decades of enterprise application optimization, middleware tuning, and platform compatibility behind x86 and win workloads beyond greenfield AI infrastructure.

- Memory ecosystem trends: CXL 3.0 ecosystem maturity will gate the AGI CPU’s disaggregated memory value proposition.

- Agentic co-design: Agentic testing on Arm systems can demonstrate whether high-value processes can be offloaded at high concurrency. Look for Meta to quantify the cost savings from this partnership.

See the complete press release on the Arm AGI CPU launch on the company’s website, and more details in the Arm AGI CPU platform brief on the Arm Community blog.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.

Other Insights from Futurum:

Will NVIDIA’s Meta Deal Ignite a CPU Supercycle?

Arm at the Center of the AI & Data Center Revolution

Arm Q3 FY 2026 Earnings Highlight AI-Driven Royalty Momentum

Image Credit: Arm

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.