Analyst(s): Brendan Burke

Publication Date: January 22, 2026

Tesla is moving aggressively on foundry diversification, tapping both Samsung and TSMC to manufacture its AI5 chip while aiming to cut power use and cost. If successful, this dual-foundry strategy could enable record production volumes, state-of-the-art performance per watt for AI inference, and unprecedented control over the silicon roadmap underpinning autonomous vehicles, robotics, and space-based data centers.

What is Covered in this Article:

- Tesla’s multi-foundry fabrication strategy for AI5 chips using both Samsung and TSMC

- Performance leap: Elon Musk claims that AI5 will match Nvidia GPUs at a fraction of the power draw

- How advanced electronic design automation (EDA) tools could accelerate chip development beyond industry norms

- Implications for autonomous vehicles, robotics, and AI supercomputing

- How rapid design cycles and foundry diversity could redefine the AI silicon market

The News: Tesla is architecting its AI5 program explicitly for record-scale AI chip volumes, announcing that the processor will be manufactured in parallel across Samsung’s and TSMC’s US-based fabs. The dual-foundry approach is scheduled for initial builds in 2026 and a major volume ramp in 2027. CEO Elon Musk has indicated that AI5 is approaching design lock with AI6 already underway, targeting roughly nine-month generational cycles. This high-volume strategy underpins a broader roadmap extending beyond vehicles to humanoid robotics and space-based AI systems.

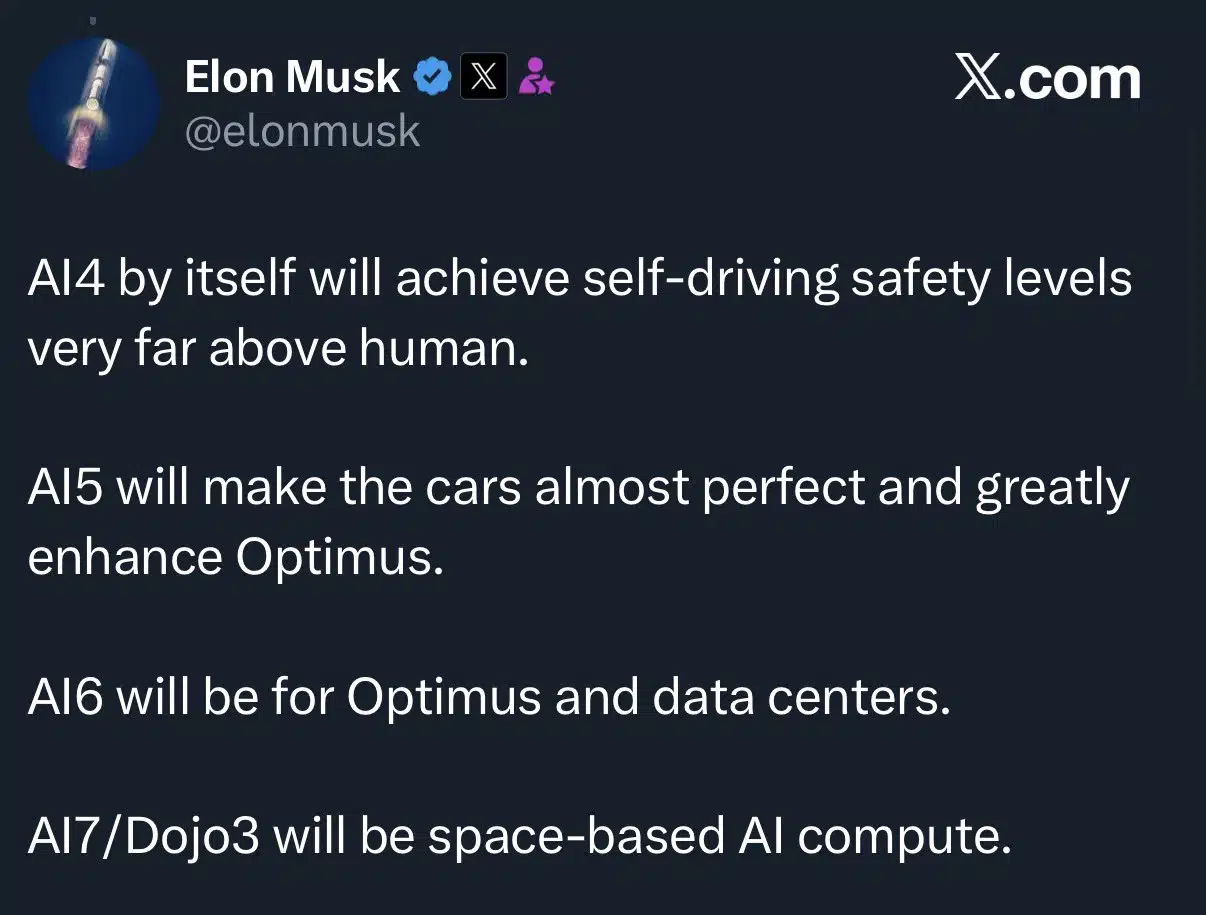

See Elon Musk’s prediction about Tesla’s AI5 volume on Musk’s X account.

Is Tesla’s Multi-Foundry Strategy the Blueprint for Record AI Chip Volumes?

Analyst Take: Tesla’s Foundry Diversification, A Contrarian Playbook for AI Chip Dominance

Tesla’s decision to produce the AI5 chip through both Samsung and TSMC marks a fundamental shift in how technology leaders can approach AI silicon, breaking from the single-vendor, slow-cadence model that has long defined the chip industry.

Breaking the Supply Chain Bottleneck

By splitting production between two foundries, Tesla can hedge against geopolitical risk while accelerating its design-to-silicon velocity. Samsung’s Taylor plant brings U.S.-based, advanced-node capacity online, while TSMC’s proven 3nm platform gives Tesla optionality and leverage. The result could be iteration cycles as short as nine months per generation, greater resilience to demand spikes, and a path to record chip volumes to feed Tesla’s automotive, data center, and robotics ambitions.

A silent enabler of Tesla’s nine-month design cycle is its partnership with electronic design automation (EDA) leader Synopsys. By using Synopsys tools for design compilation, debugging, and simulation, Tesla can automate the verification of new designs. The integration of AI into these tools has begun to reduce manual effort and to shorten typical chip design tasks from days to hours or even minutes. Our review of Tesla hiring signals suggests that its engineering team relies heavily on Synopsys tools.

Performance, Power, and the Real Tesla Moat

AI5 represents a milestone in a broader multi-processor roadmap rather than a standalone chip. Tesla is aligning successive silicon generations around improving performance per watt across three product lines: in-vehicle autonomy processors, dedicated robotics processors for humanoid systems, and high-throughput Dojo3 training silicon aimed at space-based data centers.

Across successive generations of autonomy hardware, Tesla has prioritized deployable performance per watt over peak benchmarks, steadily lowering cost per inference at fleet scale. Public disclosures around Optimus and Dojo3 further confirm that Tesla is extending this model into specialized processors, allowing efficiency gains to compound across form factors rather than reset each generation.

The Market Impact: Will Others Follow?

Tesla is signaling that foundry diversity and tight internal chip design cycles can lead to AI silicon leadership. Traditional AI chip vendors, which often rely on a single foundry and annual refresh cycles, may find themselves vulnerable. Tesla’s model could spur hyperscalers and legacy auto OEMs to reconsider their own supply chains and innovation rhythms, especially as AI demand accelerates.

What to Watch:

- Yield curves at Samsung’s Taylor facility: Samsung’s Taylor plant is a testbed for 2nm GAA technology. If Samsung can reach profitable yields in 2026, Tesla gains a massive cost advantage over competitors stuck on more expensive single-source nodes.

- The TeraFab and Volume Dominance: Musk claims Tesla will produce the highest volume of AI chips in the market. Watch for the construction of dedicated TeraFabs or exclusive lines at Samsung Taylor. High-volume silicon is the key to making Optimus economically viable.

- Supply Chain Resilience vs. Complexity: While multi-foundry sourcing reduces risk, it increases engineering complexity. Watch for whether Synopsys’s generative AI tools can actually compress the verification phase.

In the absence of detailed Tesla disclosures, Musk’s public commentary on X has become a primary source of information on AI5.

Disclosure: Futurum is a research and advisory firm that engages or has engaged in research, analysis, and advisory services with many technology companies, including those mentioned in this article. The author does not hold any equity positions with any company mentioned in this article.

Analysis and opinions expressed herein are specific to the analyst individually and data and other information that might have been provided for validation, not those of Futurum as a whole.

Image Credit: Google Gemini

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.