Analyst(s): Mitch Ashley

Publication Date: February 25, 2026

AI agents operating at machine speed have outpaced the governance capacity of traditional observability practices. Futurum Research introduces observability-native as a fundamental architectural shift, defining seven principles that embed AI behavior visibility as a first-class design principle throughout the software development lifecycle. Organizations that defer this architecture will cap agent autonomy at low-risk use cases while competitors build the governance infrastructure to deploy agents at scale.

Key Points:

- Observability-native redefines how enterprises govern AI agents by treating intent, reasoning, constraints, and outcomes as structured, first-class telemetry rather than infrastructure side effects.

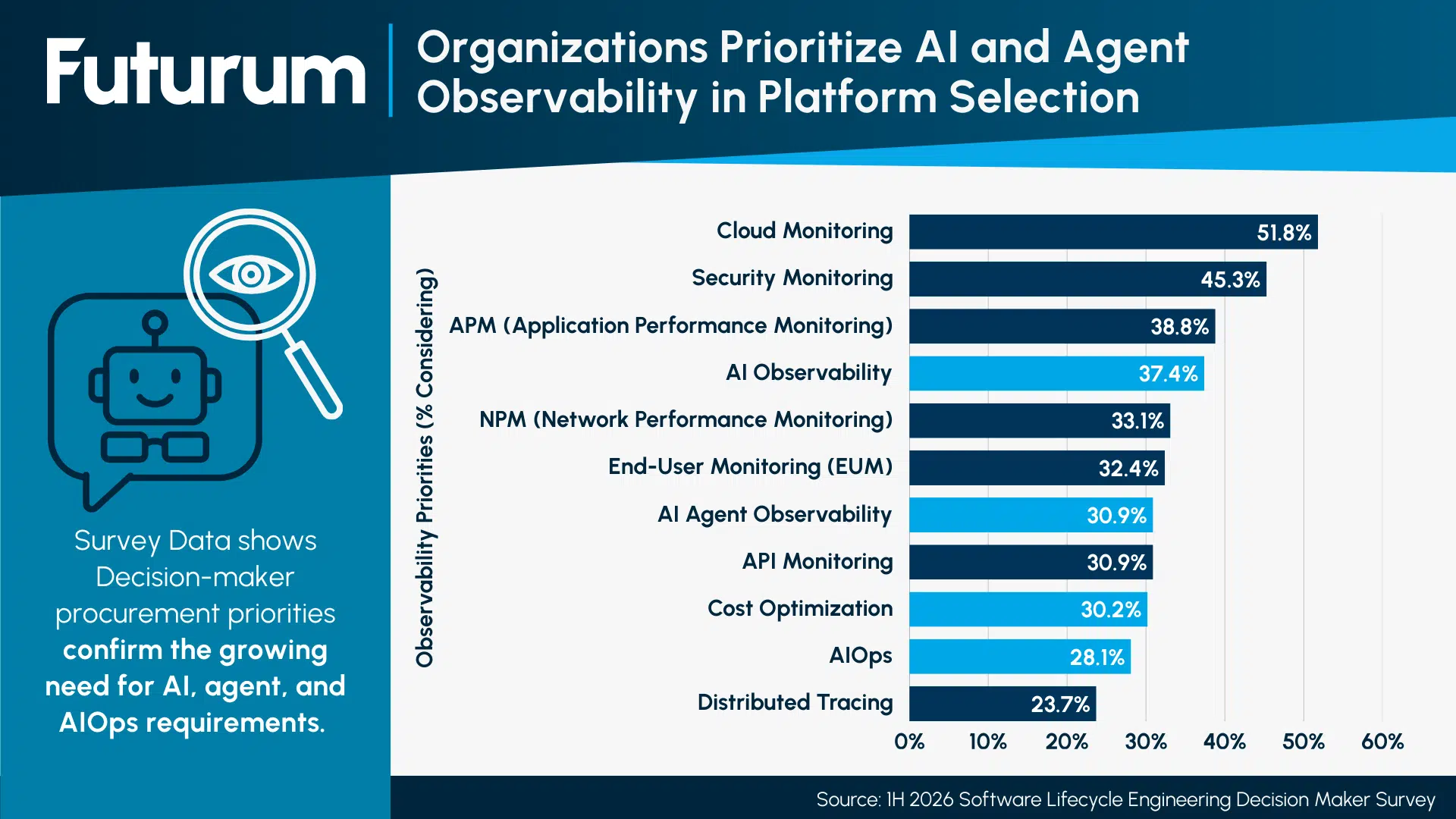

- Futurum Research’s January 2026 Software Lifecycle Engineering Decision-Maker Study shows AI observability and AI agent observability rank fourth and sixth among enterprise procurement priorities.

- Enterprises will grant AI agents autonomy only to the degree they can observe and control agent behavior in real time, making observability-native architecture a non-negotiable prerequisite for production-scale agent deployment.

Overview:

Traditional observability was designed around the assumptions of human-driven operations. AI agents invalidate those assumptions. Agents plan, generate, test, deploy, and modify software in continuous loops spanning seconds to hours, at a velocity no human review process can match. The constraint that once enabled observability, inference at human speed, becomes its central liability.

Observability-Native as the Go-Forward Foundation

Observability-native generates structured, explainable signals directly from AI workflows and control planes rather than inferring behavior from infrastructure metrics. Futurum Research’s January 2026 Software Lifecycle Engineering Decision-Maker Study (N=393) confirms enterprise procurement already reflects this shift, with AI observability ranking fourth at 37.4% and AI agent observability ranking sixth at 30.9% among platform selection priorities.

Figure 1: Organizations Prioritize AI and Agent Observability in Platform Selection

“Futurum’s survey of decision-makers confirms what practitioners already know: AI agents require much greater visibility and control for them to operate autonomously, signaling that understanding agent behavior is now a core observability requirement, not a bolt-on feature.

The implication for vendors is clear. Platforms that capture infrastructure telemetry but treat agent decision-making as an opaque internal state will hit a ceiling with enterprise customers. The autonomy organizations grant agents will be bounded by the visibility they have into agent behavior, requiring an observability-native approach that removes that ceiling.” – Mitch Ashley, VP Practice Lead, AI-Native Software Engineering, Futurum Research

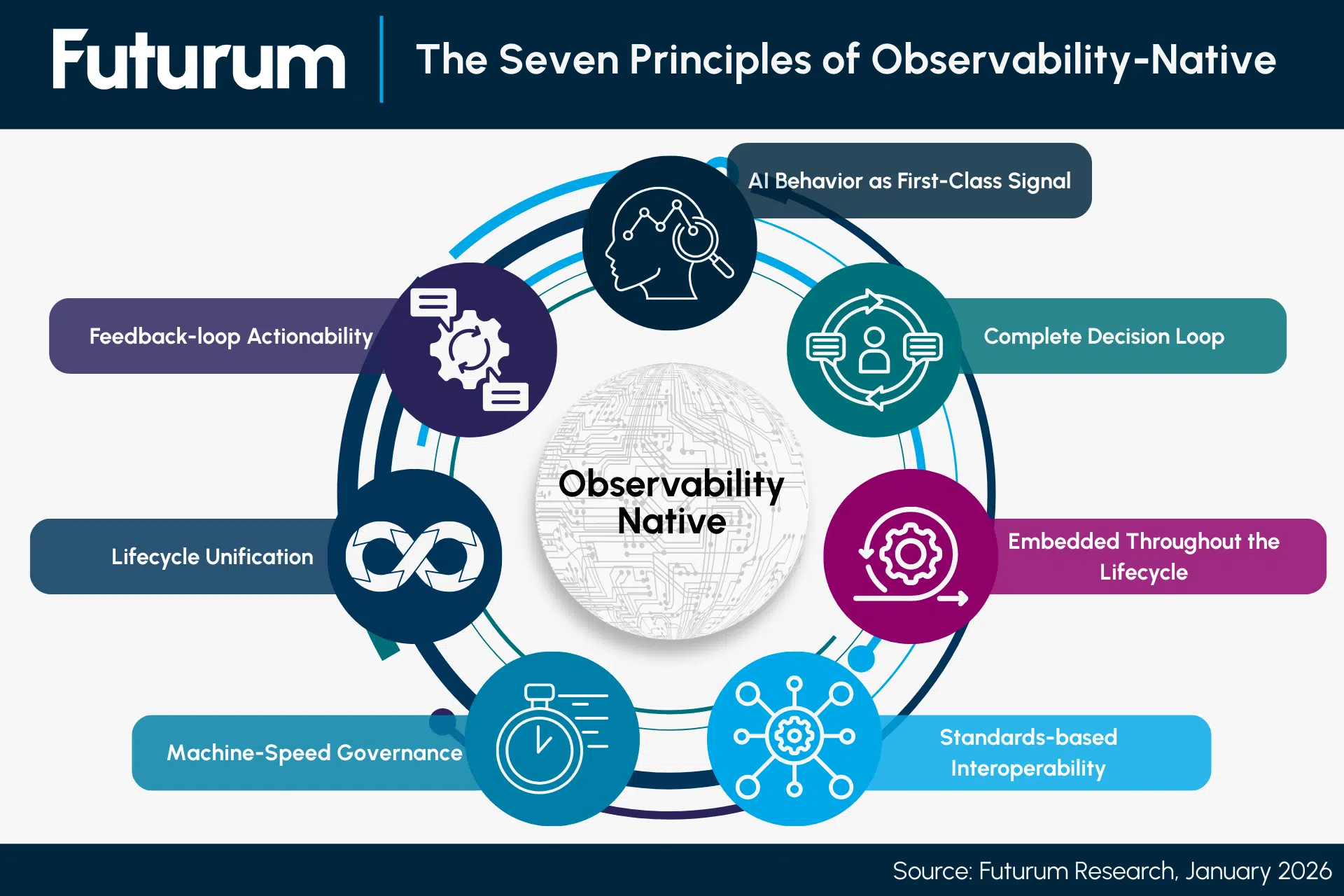

Futurum defines observability-native through seven principles: AI Behavior as First-Class Signal, Complete Decision Cycle Capture, Embedded Throughout the Lifecycle, Open Standards-Based Interoperability, Machine-Speed Governance, Lifecycle Unification, and Feedback-Loop Actionability. Each principle targets a specific failure mode that emerges when traditional observability confronts autonomous agent execution.

Figure 2: Seven Principles of Observability-Native

The Decision Cycle That Defines Agent Trust

The framework centers on capturing a four-stage decision cycle. Intent establishes what the agent is trying to achieve. Reasoning traces the path taken and the alternatives considered. Constraints document which guardrails shaped the execution. Outcomes record what changed and what authorization enabled it. Without all four stages, enterprises cannot validate agent operations, troubleshoot incorrect decisions, or demonstrate compliance during audits. This cycle is the real-time signal that enables machine-speed governance.
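As an illustration of what capturing the four stages as structured telemetry might look like, the sketch below models one agent decision as a record with a completeness check. It is a minimal sketch, not Futurum's schema: the class name `DecisionRecord`, its field names, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class DecisionRecord:
    """Hypothetical record of one agent decision across the four stages."""

    intent: Optional[str] = None                          # what the agent is trying to achieve
    reasoning: List[str] = field(default_factory=list)    # path taken and alternatives considered
    constraints: List[str] = field(default_factory=list)  # guardrails that shaped execution
    outcome: Optional[str] = None                         # what changed
    authorization: Optional[str] = None                   # what authorization enabled the change

    def missing_stages(self) -> List[str]:
        """Return the names of the stages that were not captured."""
        missing = []
        if not self.intent:
            missing.append("intent")
        if not self.reasoning:
            missing.append("reasoning")
        if not self.constraints:
            missing.append("constraints")
        # The outcomes stage needs both the change and its authorization.
        if not self.outcome or not self.authorization:
            missing.append("outcomes")
        return missing

    def is_auditable(self) -> bool:
        """A record is treated as auditable only when all four stages are present."""
        return not self.missing_stages()

    def to_json(self) -> str:
        """Serialize the record as a structured, machine-readable signal."""
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative values only.
record = DecisionRecord(
    intent="Roll back a failing deployment",
    reasoning=["Error rate exceeded threshold", "Restart considered and rejected"],
    constraints=["Production changes require approval"],
    outcome="Reverted to previous release",
    authorization="Approved by on-call policy (hypothetical)",
)
partial = DecisionRecord(intent="Scale up worker pool")
```

Here `record.is_auditable()` holds, while `partial.missing_stages()` names the three stages that were never captured, which is the kind of gap that would block validation or audit.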

Autonomy Ceiling and Governance Risk

Enterprises grant agents autonomy only to the degree they can observe and control behavior in real time. Platforms treating agent decision-making as an opaque internal state cap customers at low-risk use cases. As agents take on autonomous deployment and production system modification, observability gaps stop being tooling shortcomings and become governance failures. Boards, auditors, and regulators require auditable records of decision rationale, policy enforcement, and outcomes. Trust in AI systems will be earned through evidence, not assurances.
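The autonomy ceiling described above can be sketched as a simple policy gate. This is an assumption-laden illustration rather than a prescribed mechanism: the two tiers, the `autonomy_ceiling` and `is_permitted` functions, and the rule that all four decision-cycle stages plus real-time control are needed for production changes are hypothetical simplifications of the text.

```python
from enum import IntEnum
from typing import Iterable


class Autonomy(IntEnum):
    """Hypothetical autonomy tiers, ordered from least to most trusted."""

    LOW_RISK_ONLY = 0
    PRODUCTION = 1


# The four decision-cycle stages an enterprise must be able to observe.
REQUIRED_STAGES = frozenset({"intent", "reasoning", "constraints", "outcomes"})


def autonomy_ceiling(observable_stages: Iterable[str], realtime_control: bool) -> Autonomy:
    """Return the highest tier an agent may operate at, given current visibility.

    Assumption: production autonomy requires every stage to be observable
    and real-time control to be available; anything less caps the agent
    at low-risk use cases.
    """
    fully_observable = REQUIRED_STAGES.issubset(set(observable_stages))
    if fully_observable and realtime_control:
        return Autonomy.PRODUCTION
    return Autonomy.LOW_RISK_ONLY


def is_permitted(requested: Autonomy, ceiling: Autonomy) -> bool:
    """An action is permitted only if it does not exceed the ceiling."""
    return requested <= ceiling


# An opaque platform exposes only part of the decision cycle.
opaque = autonomy_ceiling({"intent", "outcomes"}, realtime_control=True)
# A platform exposing the full cycle with real-time control.
transparent = autonomy_ceiling(REQUIRED_STAGES, realtime_control=True)
```

Under these assumptions, a production deployment request is rejected on the opaque platform and allowed on the transparent one, which mirrors the claim that visibility, not agent capability, sets the limit.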

Conclusion

Observability-native is the architectural prerequisite for enterprise AI agent adoption at scale. The seven principles provide vendors with a design framework and enterprises with a procurement evaluation standard. Organizations that treat observability as an afterthought will find agent programs constrained not by capability but by governance capacity.

Futurum clients can read more about it in the Futurum Intelligence Platform, and non-clients can learn more here: Software Lifecycle Engineering Practice.

About the Futurum Software Lifecycle Engineering Practice

The Futurum Software Lifecycle Engineering Practice provides actionable, objective insights for market leaders and their teams so they can respond to emerging opportunities and innovate. Public access to our coverage can be seen here. Follow news and updates from the Futurum Practice on LinkedIn and X. Visit the Futurum Newsroom for more information and insights.

About Futurum Intelligence for Market Leaders

Futurum Intelligence’s IQ service provides actionable insight from analysts, reports, and interactive visualization datasets, helping leaders drive their organizations through transformation and business growth. Subscribers can log into the platform at https://app.futurumgroup.com/, and non-subscribers can find additional information at Futurum Intelligence.

Follow news and updates from Futurum on X and LinkedIn using #Futurum.

Author Information

Mitch Ashley is VP and Practice Lead of Software Lifecycle Engineering for The Futurum Group. Mitch has over 30 years of experience as an entrepreneur, industry analyst, and product development and IT leader, with expertise in software engineering, cybersecurity, DevOps, DevSecOps, cloud, and AI. As an entrepreneur, CTO, CIO, and head of engineering, Mitch led the creation of award-winning cybersecurity products utilized in the private and public sectors, including the U.S. Department of Defense and all military branches. Mitch also led managed PKI services for the broadband, Wi-Fi, IoT, energy management, and 5G industries, product certification test labs, an online SaaS (93m transactions annually), the development of video-on-demand and Internet cable services, and a national broadband network.

Mitch shares his experiences as an analyst, keynote and conference speaker, panelist, host, moderator, and expert interviewer discussing CIO/CTO leadership, product and software development, DevOps, DevSecOps, containerization, container orchestration, AI/ML/GenAI, platform engineering, SRE, and cybersecurity. He publishes his research on futurumgroup.com and TechstrongResearch.com/resources. He hosts multiple award-winning video and podcast series, including DevOps Unbound, CISO Talk, and Techstrong Gang.