Austin, Texas, USA, February 25, 2026

Futurum Survey Finds That Inference at Scale Is the Primary Workload for Only 35% of Enterprises

Enterprise AI techniques have become so specialized that no single workload type is primary for the majority of enterprise decision-makers, according to new research from Futurum.

The balanced distribution across four distinct workload categories—inference, foundation model training, domain-specific training, and fine-tuning—signals that enterprises may reject one-size-fits-all GPU procurement strategies in favor of heterogeneous infrastructure optimized for distinct computational profiles.

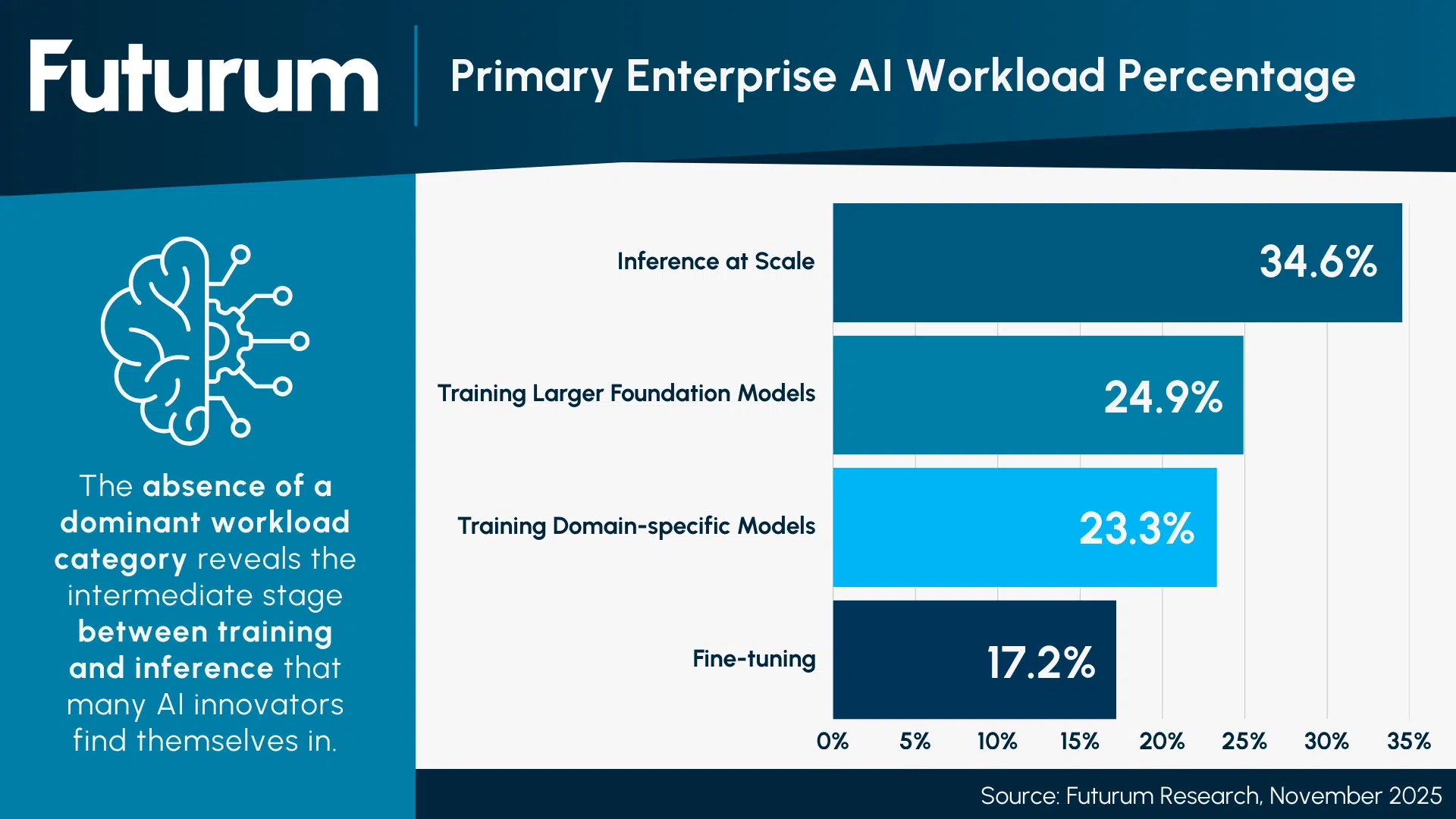

The research surveyed AI infrastructure decision-makers at global enterprises with annual revenues exceeding $100 million, capturing granular workload distribution data for production AI systems. The findings show that inference at scale is the primary workload for 34.6% of respondents, training large foundation models for 24.9%, training domain-specific models for 23.3%, and fine-tuning existing models for 17.2%. The absence of a dominant workload category reflects the intermediate stage between training and inference in which many AI innovators find themselves.

Figure 1: Primary Enterprise AI Workload Percentage
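The four percentages reported above can be tallied in a few lines of Python. This is only a sketch for checking the arithmetic, not part of the Futurum methodology. The dictionary keys, the `summarize` helper, and the `customization_share` name are hypothetical labels introduced here, and the figures are read as the share of enterprises naming each workload as primary, following the headline and figure caption.

```python
# Percentages quoted in the release. Assumption: each is the share of
# surveyed enterprises naming that workload as primary.
primary_workload_share = {
    "inference at scale": 34.6,
    "training large foundation models": 24.9,
    "training domain-specific models": 23.3,
    "fine-tuning existing models": 17.2,
}


def summarize(shares):
    """Return (total, leading category, whether any category exceeds 50%)."""
    total = round(sum(shares.values()), 1)  # round away float noise
    leader = max(shares, key=shares.get)
    has_majority = shares[leader] > 50.0
    return total, leader, has_majority


total, leader, has_majority = summarize(primary_workload_share)

# Hypothetical grouping: domain-specific training plus fine-tuning.
customization_share = round(
    primary_workload_share["training domain-specific models"]
    + primary_workload_share["fine-tuning existing models"],
    1,
)

print(f"total: {total}%")
print(f"leading workload: {leader} ({primary_workload_share[leader]}%)")
print(f"any majority workload: {has_majority}")
print(f"domain-specific training + fine-tuning: {customization_share}%")
```

Under these assumptions the four categories account for all responses, inference leads without reaching a majority, and the two model-customization categories together exceed the share for inference alone.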

Brendan Burke, Research Director at Futurum, said, “No single workload standing out as the primary method of enterprise AI shows that there will be a future for workload-optimized silicon. Training foundation models, serving inference, and fine-tuning custom models have completely different performance bottlenecks, and the market is finally waking up to the idea that workload-optimized silicon will deliver better TCO than throwing frontier data centers at every problem.”

The research reveals several key developments shaping the AI infrastructure landscape:

- For more than half (57%) of organizations, the largest AI cluster has fewer than 2,048 accelerators

- 38% of organizations primarily access data center compute via hardware capital expenditures

- Leading performance metrics for AI clusters include training speed (31%), $ per FLOP or tokens/second/$ (22%), and FLOPs per watt (16%)

“Fine-tuning and domain-specific model training represent 40.5% of primary enterprise AI workloads combined, yet most accelerator software stacks are optimized for frontier-scale pre-training and inference,” Burke observed. “Fine-tuning workloads have unique memory access patterns and require native LoRA kernel support. Without software innovation for these workloads, compute vendors will underperform on nearly half of enterprise production workloads.”

The research suggests that open-source AI frameworks and model deployment may become leading enterprise investment priorities, with decision-makers citing open-source integration as critical to avoiding vendor lock-in and maintaining flexibility across heterogeneous workloads. As enterprises look to leverage the reasoning capabilities of open-source models, agentic AI deployment is emerging as a distinct fifth workload category that does not fit neatly into training or inference paradigms. Semiconductor and neocloud product roadmaps can benefit from addressing these evolving enterprise needs.

Read more in the “2H 2025 Data Center Semiconductors Global Enterprise Decision Maker Survey Report” and “Q2 2025 Data Center Semiconductor Spot Check Report” on the Futurum Intelligence Platform.

About Futurum Intelligence for Market Leaders

Futurum Intelligence’s Semiconductor, Supply Chain, and Emerging Tech IQ service provides actionable insight from analysts, reports, and interactive visualization datasets, helping leaders drive their organizations through transformation and business growth. Subscribers can log into the platform at https://app.futurumgroup.com/, and non-subscribers can find additional information at Futurum Intelligence.

Follow news and updates from Futurum on X and LinkedIn using #Futurum. Visit the Futurum Newsroom for more information and insights.

Other Insights from Futurum:

Will NVIDIA’s Meta Deal Ignite a CPU Supercycle?

Does Nebius’ Acquisition of Tavily Create the Leading Agentic Cloud?

Microsoft’s Maia 200 Signals the XPU Shift Toward Reinforcement Learning

Author Information

Brendan is Research Director, Semiconductors, Supply Chain, and Emerging Tech. He advises clients on strategic initiatives and leads the Futurum Semiconductors Practice. He is an experienced tech industry analyst who has guided tech leaders in identifying market opportunities spanning edge processors, generative AI applications, and hyperscale data centers.

Before joining Futurum, Brendan consulted with global AI leaders and served as a Senior Analyst in Emerging Technology Research at PitchBook. At PitchBook, he developed market intelligence tools for AI, highlighted by one of the industry’s most comprehensive AI semiconductor market landscapes encompassing both public and private companies. He has advised Fortune 100 tech giants, growth-stage innovators, global investors, and leading market research firms. Before PitchBook, he led research teams in tech investment banking and market research.

Brendan is based in Seattle, Washington. He has a Bachelor of Arts Degree from Amherst College.